Introduction

Gas chromatography (GC) calibration is the process of establishing a verified, quantitative relationship between the GC instrument's signal output and the known concentration or mass of analytes in a reference standard, under controlled conditions. Without proper calibration, even high-performance GC instruments cannot produce defensible quantitative results.

This matters directly to analytical chemists, QC/QA professionals, and lab technicians across pharmaceuticals, environmental monitoring, emissions testing, and research labs. The consequences of miscalibration are measurable: an EPA Region 9 investigation documented a laboratory using a calibration curve with a correlation coefficient of 0.9465, below the required 0.99 minimum, resulting in enforcement scrutiny.

Regulatory pressure extends beyond environmental testing. FDA Warning Letters have cited GC calibration failures including incomplete instrument calibration at Dr. Reddy's Laboratories and inadequate controls over GC raw data calibration at Landy International.

TL;DR

- GC calibration covers two layers: hardware verification (instrument calibration) and signal-to-concentration mapping (analytical calibration)

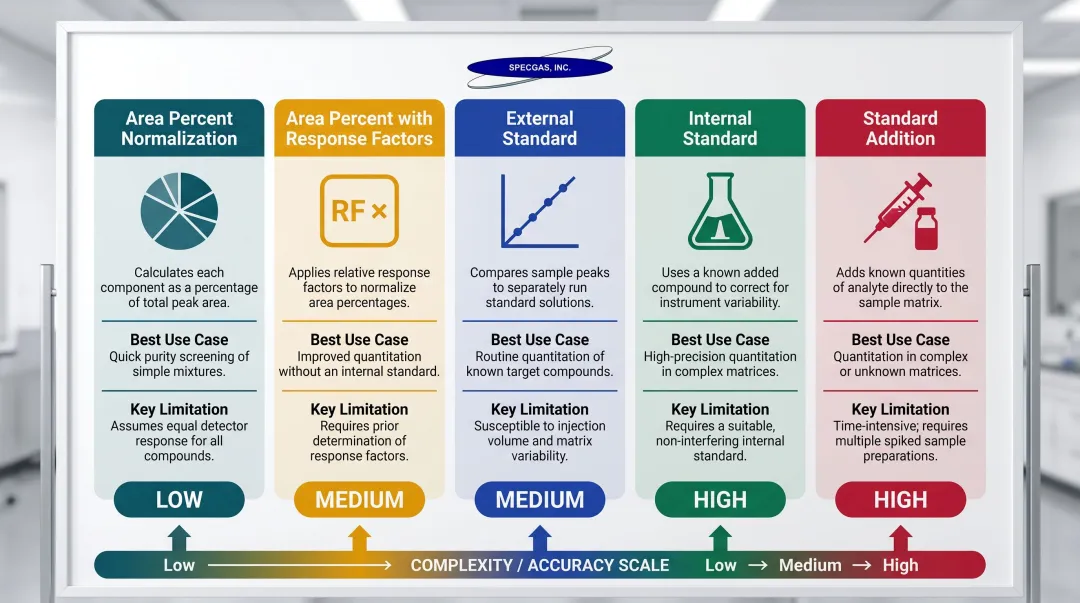

- Five methods are used in practice: area percent normalization, external standard, internal standard, standard addition, and area percent with response factors

- Standard quality determines data reliability — NIST-traceable gases with proper cylinder treatment are essential for reactive components

- SpecGas Inc. produces NIST-traceable calibration gas standards with proprietary cylinder treatment for stable reactive gas mixtures

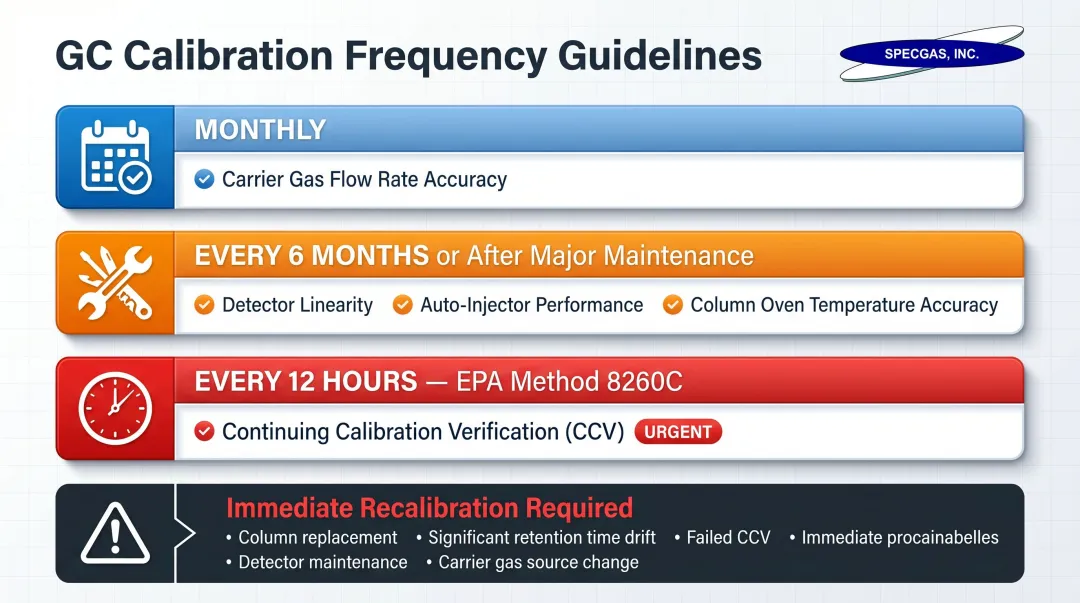

- Calibration frequency depends on the parameter: carrier gas flow monthly, detector linearity and temperature accuracy every six months or after maintenance

What Is GC Calibration?

The International Vocabulary of Metrology (VIM, JCGM 200:2012) defines calibration as an operation that, under specified conditions, first establishes a relation between the quantity values provided by measurement standards and the corresponding indications of a measuring system, each with its associated measurement uncertainty, and then uses that relation to obtain measurement results from indications.

Two Distinct Calibration Layers

GC calibration operates on two distinct levels that are often conflated:

Instrument/System Calibration — Verifies that physical hardware functions within specification:

- Column oven temperature accuracy

- Carrier gas flow rate stability

- Detector performance and linearity

Analytical/Methodological Calibration — Generates calibration curves that translate detector signal into concentration or mass data, establishing the mathematical relationship that makes quantitative analysis possible.

How Calibration Differs from System Suitability

System Suitability Testing (SST) confirms the instrument is performing correctly before a specific analysis. ICH Q2(R1) and USP <621> define SST as an integral part of the analytical procedure — verifying that equipment, electronics, analytical operations, and samples form a system fit for use.

In practice, calibration establishes the core measurement relationship, while system suitability confirms that relationship still holds for that day's run. Both are required; neither replaces the other.

Why GC Calibration Matters in Analytical Workflows

A GC system running without verified calibration produces concentration data with no reliable anchor to reality, which can lead to incorrect batch releases, failed compliance submissions, or research conclusions built on flawed numbers. Regulatory agencies across pharmaceuticals, environmental monitoring, and emissions testing have responded with explicit, enforceable calibration requirements.

Regulatory Frameworks Driving Calibration Requirements

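FDA/cGMP Regulations: 21 CFR 211.194 mandates that laboratory records include complete data derived from all tests necessary to assure compliance with established specifications and standards. Under 21 CFR 211.160(b)(4), instruments, apparatus, gauges, and recording devices must be calibrated at suitable intervals according to an established written program, and 21 CFR 211.67 requires equipment to be maintained at appropriate intervals.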

EPA Methods: EPA Method 8260C requires an initial calibration and continuing calibration verification every 12 hours. EPA Method 18 requires pre- and post-test calibration checks with response factor deviations no greater than 5%.

ISO/IEC 17025:2017: Clause 6.5 requires laboratories to establish metrological traceability of measurement results via a documented unbroken chain of calibrations to recognized standards.

Each of these frameworks carries audit and enforcement consequences—making calibration records something regulators will ask for, not just something quality teams file away.

How GC Calibration Works

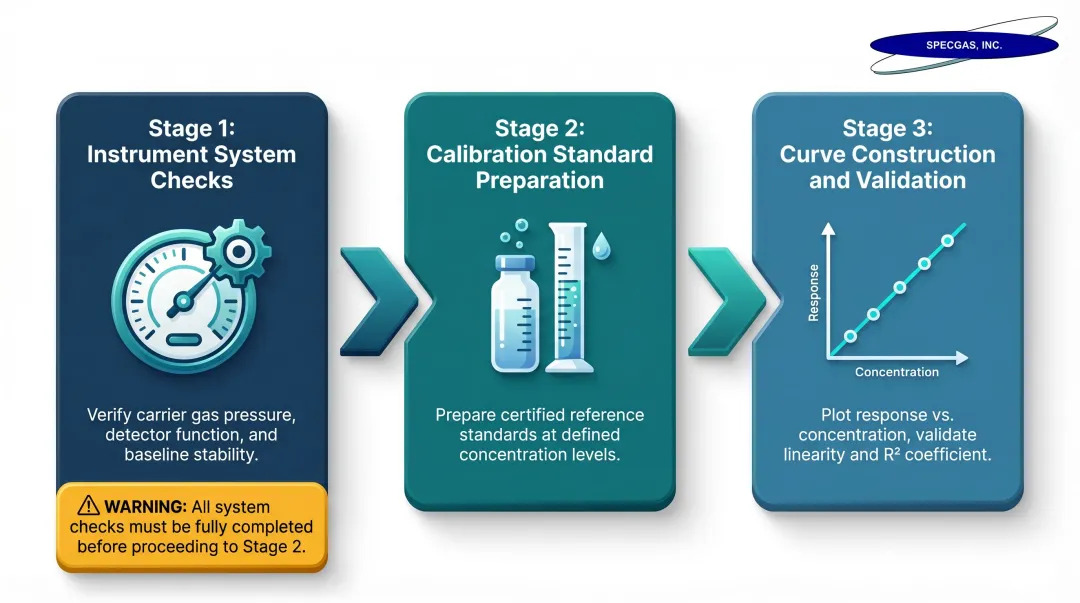

GC calibration follows three sequential stages: verifying instrument parameters, preparing and running reference standards, then constructing the calibration curve. Each stage must be completed in order—skipping instrument verification before running standards is one of the most common sources of avoidable calibration error.

Step 1: Instrument System Checks

Three core instrument parameters must be verified before analytical calibration:

Column Oven Temperature Accuracy:

- Set at a minimum of three temperature points across the operating range (e.g., 40°C to 300°C)

- Verified using a calibrated external temperature probe

- Agilent's Operational Qualification protocols specify accuracy tolerance of ±1.0% of the setpoint in Kelvin

- Acceptance criterion: ±2°C from setpoint
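The two temperature criteria above are expressed on different scales. Converting the ±1.0%-of-setpoint-in-Kelvin tolerance into °C shows that it is looser than ±2°C everywhere in the 40°C to 300°C example range, so a reading that meets ±2°C also meets it. A minimal sketch; the function names and the probe reading are hypothetical:

```python
def kelvin_percent_tolerance_c(setpoint_c: float, percent: float = 1.0) -> float:
    """Tolerance in °C for ±percent of the setpoint expressed in Kelvin (1 K = 1 °C as a difference)."""
    return (setpoint_c + 273.15) * percent / 100.0


def temperature_passes(setpoint_c: float, measured_c: float, limit_c: float = 2.0) -> bool:
    """True if the external probe reading is within ±limit_c of the setpoint."""
    return abs(measured_c - setpoint_c) <= limit_c


for sp in (40.0, 150.0, 300.0):
    print(f"{sp:5.1f} °C: ±1% in K = ±{kelvin_percent_tolerance_c(sp):.2f} °C, fixed limit = ±2.00 °C")

# Hypothetical calibrated-probe reading at the 150 °C setpoint
print(temperature_passes(150.0, 151.4))
```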

Carrier Gas Flow Rate:

- Verified using a calibrated flow meter at setpoint values across the operating range

- Agilent specifications for FID flow accuracy: ±40.0 mL/min for Air, ±3.0 mL/min for Hydrogen, ±2.5 mL/min for Make-up gas

- Acceptance criterion: Observed flow within ±10% of set value
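The flow tolerances quoted above equal ±10% of 400, 30, and 25 mL/min; treating those values as the FID setpoints is an assumption for illustration. A minimal sketch of the ±10% acceptance check, using hypothetical flow-meter readings:

```python
# Assumed FID gas setpoints (mL/min); 10% of each equals the tolerances quoted above.
SETPOINTS = {"air": 400.0, "hydrogen": 30.0, "makeup": 25.0}


def flow_within_tolerance(setpoint: float, observed: float, pct: float = 10.0) -> bool:
    """True if the observed flow is within ±pct % of the setpoint."""
    return abs(observed - setpoint) <= setpoint * pct / 100.0


# Hypothetical readings from a calibrated flow meter (mL/min)
readings = {"air": 372.0, "hydrogen": 33.5, "makeup": 24.1}

for gas, sp in SETPOINTS.items():
    obs = readings[gas]
    status = "PASS" if flow_within_tolerance(sp, obs) else "FAIL"
    print(f"{gas:9s} set {sp:6.1f}  observed {obs:6.1f}  {status}")
```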

Detector Performance:

- Precision: Injection repeatability measured as %RSD (Relative Standard Deviation)

- Linearity: Correlation coefficient NLT (Not Less Than) 0.99 using multi-level standards

- Dynamic Range: Verified across expected analytical range
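Injection repeatability is usually summarized as %RSD = 100 × s / x̄ of replicate peak areas, where s is the sample (n - 1) standard deviation. A minimal sketch with hypothetical peak areas; the acceptance limit is method-specific, so the 2% below is only a placeholder:

```python
import statistics


def percent_rsd(values: list[float]) -> float:
    """Percent relative standard deviation using the sample (n - 1) standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


# Hypothetical peak areas from six replicate injections of one standard
areas = [15230.0, 15310.0, 15180.0, 15275.0, 15240.0, 15295.0]

rsd = percent_rsd(areas)
RSD_LIMIT = 2.0  # placeholder: take the real limit from your method or SST criteria
print(f"%RSD = {rsd:.2f}% -> {'PASS' if rsd <= RSD_LIMIT else 'FAIL'}")
```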

Step 2: Calibration Standard Preparation

Analysts prepare reference standards at five or more known concentration levels spanning the expected sample range to enable calibration curve construction. The accuracy of the entire calibration depends on the verified concentration and stability of these standards—a degraded or imprecisely blended standard propagates error through every result calculated from that curve.

NIST-traceable certified calibration gas standards reduce this uncertainty by providing a certified concentration with a stated uncertainty and a defined certification period over which that value is expected to remain valid. For reactive gas mixtures, that stability is especially difficult to achieve. SpecGas Inc. addresses this through gravimetric blending and a proprietary internal cylinder treatment process that maintains stability in reactive mixtures including H₂S, chlorine, HCN, and formaldehyde, at concentration levels from 300 ppb to 10%.
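One common way to obtain several calibration levels from a single certified cylinder is dynamic dilution with zero gas. Assuming the standard and diluent flows are metered independently, each level is C = C_cyl × F_std / (F_std + F_dil). The cylinder concentration, total flow, and fractions below are hypothetical:

```python
def diluted_concentration(c_cylinder: float, f_std: float, f_dil: float) -> float:
    """Concentration after mixing a standard-gas flow with a zero-gas diluent flow."""
    return c_cylinder * f_std / (f_std + f_dil)


C_CYL_PPM = 100.0    # hypothetical certified cylinder concentration, ppm
TOTAL_FLOW = 1000.0  # hypothetical total flow delivered to the GC, mL/min

# Five standard-gas fractions chosen to span the expected sample range
fractions = [0.05, 0.10, 0.25, 0.50, 1.00]

levels = []
for frac in fractions:
    f_std = frac * TOTAL_FLOW
    f_dil = TOTAL_FLOW - f_std
    levels.append(diluted_concentration(C_CYL_PPM, f_std, f_dil))

print([round(c, 2) for c in levels])
```

In practice the uncertainty of the dilution system's flow measurement adds to the cylinder's certified uncertainty for every diluted level.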

Step 3: Running Standards and Constructing the Calibration Curve

The calibration curve construction process:

- Inject standards in replicate (typically triplicate)

- Record detector response (peak area is standard in modern systems)

- Plot response against known concentration or mass

- Use least-squares linear regression to establish the calibration function

Calibration curve validity depends on:

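- Correlation coefficient r or coefficient of determination R² (typically ≥ 0.99, depending on which statistic the method specifies)

- Concentration range that brackets expected sample concentrations

- Sufficient data points (ICH Q2(R1) recommends a minimum of five concentration levels)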
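The curve-construction steps and validity checks above can be sketched in plain Python: an ordinary least-squares fit of mean peak area against concentration, followed by checks on level count, r, and range bracketing. The standards, areas, and expected sample range are hypothetical:

```python
import math


def fit_line(x: list[float], y: list[float]) -> tuple[float, float, float]:
    """Ordinary least-squares fit y = slope*x + intercept; returns (slope, intercept, r)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    r = sxy / math.sqrt(sxx * syy)
    return slope, intercept, r


# Hypothetical standards: concentration (ppm) vs mean peak area of triplicate injections
conc = [5.0, 10.0, 25.0, 50.0, 100.0]
area = [1020.0, 2010.0, 5080.0, 9950.0, 20100.0]

m, b, r = fit_line(conc, area)
expected_samples = (8.0, 80.0)  # hypothetical expected sample range, ppm

checks = {
    "at least five levels": len(set(conc)) >= 5,
    "r >= 0.99": r >= 0.99,
    "range brackets samples": min(conc) <= expected_samples[0] and max(conc) >= expected_samples[1],
}
print(f"slope={m:.2f}, intercept={b:.1f}, r={r:.5f}, R^2={r * r:.5f}")
print(checks)
```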

The Five GC Calibration Methods Explained

The choice of calibration method depends on analytical goals, sample matrix complexity, regulatory requirements, and acceptable levels of uncertainty.

Area Percent Normalization

This method equates a peak's area percent directly to its mass or concentration percent in the sample. It's the simplest approach but relies on critical assumptions:

- All analytes are detected

- Peaks are pure (no co-elution)

- Detector response is equal for all compounds

USP <621> allows this for impurity profiling at investigational thresholds, but warns that these assumptions often don't hold for accurate quantitation. It's often output by GC data systems by default, without anyone confirming the underlying assumptions hold.
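Area percent normalization reduces to dividing each peak area by the sum of all integrated areas. The sketch below (hypothetical compounds and areas) makes clear why the assumptions above matter: a missed peak, a co-elution, or a compound with a different response shifts every reported percentage, not just its own.

```python
def area_percent(areas: dict[str, float]) -> dict[str, float]:
    """Each peak's share of the total integrated area, in percent."""
    total = sum(areas.values())
    return {name: 100.0 * a / total for name, a in areas.items()}


# Hypothetical integrated peak areas from one chromatogram
peaks = {"methane": 4200.0, "ethane": 3900.0, "propane": 1900.0}
print({k: round(v, 2) for k, v in area_percent(peaks).items()})
```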

Area Percent Normalization with Response Factors

Because FID does not respond equally to all compounds, raw area percent values can misrepresent actual concentrations. This approach corrects for that by applying detector response factors—ratios that account for different signal intensities per unit mass.

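Corrected area percent is obtained by multiplying each raw peak area by its compound-specific correction factor and renormalizing so the corrected areas sum to 100%: corrected % for compound i = (A_i × f_i) / Σ(A_j × f_j) × 100. If your factors are expressed as signal per unit mass, divide the areas by them instead. Use this method over pure area normalization whenever FID response variability across compounds is a factor.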
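A minimal sketch comparing raw and corrected normalization: each area is multiplied by its factor and the corrected areas are renormalized to 100%. Compounds A to C and their relative correction factors (reference compound = 1.00) are hypothetical:

```python
def corrected_area_percent(areas: dict[str, float], factors: dict[str, float]) -> dict[str, float]:
    """Multiply each peak area by its correction factor, then renormalize to 100%."""
    corrected = {name: a * factors[name] for name, a in areas.items()}
    total = sum(corrected.values())
    return {name: 100.0 * c / total for name, c in corrected.items()}


# Hypothetical peak areas and relative correction factors
areas = {"A": 5000.0, "B": 3000.0, "C": 2000.0}
factors = {"A": 1.00, "B": 1.20, "C": 0.80}

raw = {k: round(100.0 * v / sum(areas.values()), 2) for k, v in areas.items()}
print("raw      ", raw)
print("corrected", {k: round(v, 2) for k, v in corrected_area_percent(areas, factors).items()})
```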

External Standard Calibration

A calibration curve is generated from standards run separately from samples, with peak area plotted against known concentration. USP <621> describes preparing standard solutions across a linear range and using linear regression to establish the calibration function.

One limitation: it doesn't account for variability introduced during sample preparation or injection, so it is most reliable when extraction is simple and injection reproducibility is high.
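Quantitating a sample against an external standard curve means inverting the fitted line, conc = (area - intercept) / slope, and refusing to report results outside the calibrated range. A minimal sketch; the slope, intercept, range, and sample areas are hypothetical:

```python
def external_standard_conc(sample_area: float, slope: float, intercept: float,
                           cal_min: float, cal_max: float) -> tuple[float, bool]:
    """Invert area = slope*conc + intercept; flag results outside the calibrated range."""
    conc = (sample_area - intercept) / slope
    return conc, cal_min <= conc <= cal_max


# Hypothetical calibration from a five-level curve spanning 5 to 100 ppm
SLOPE, INTERCEPT = 200.0, 10.0

for area in (6010.0, 24010.0):
    conc, in_range = external_standard_conc(area, SLOPE, INTERCEPT, 5.0, 100.0)
    note = "" if in_range else "  <- outside calibrated range: dilute and re-run, do not extrapolate"
    print(f"area {area:8.1f} -> {conc:6.2f} ppm{note}")
```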

Internal Standard Calibration

A known concentration of a compound (the internal standard) is added to both samples and standards before preparation. The ratio of analyte peak area to internal standard peak area is plotted against concentration.

This corrects for variability in extraction recovery and injection volume, making it more precise than external standard when sample handling is complex. EPA Method 8260C mandates internal standard calibration for GC/MS VOC analysis.

Internal Standard Selection Criteria:

- No interference with sample components

- Similar chromatographic behavior to analytes

- High purity and availability

- No co-elution with target analytes
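With an internal standard, the curve is fitted to area ratios rather than raw areas, so a change in injected volume that scales both peaks equally cancels out. A minimal sketch, assuming the internal standard is at the same concentration in every standard and sample and that the ratio is linear in concentration; all values are hypothetical:

```python
def fit_line(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit y = slope*x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


# Hypothetical standards: analyte conc (ug/L), analyte peak area, internal-standard peak area
conc = [5.0, 10.0, 20.0, 50.0, 100.0]
a_area = [520.0, 980.0, 2100.0, 5150.0, 9900.0]
is_area = [10000.0, 9800.0, 10200.0, 10100.0, 9900.0]

ratios = [a / i for a, i in zip(a_area, is_area)]
slope, intercept = fit_line(conc, ratios)

# Hypothetical sample injected short: both peaks are about 10% smaller, the ratio is not
sample_ratio = 2700.0 / 9000.0
sample_conc = (sample_ratio - intercept) / slope
print(f"sample = {sample_conc:.1f} ug/L")
```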

Standard Addition

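In this method, the sample is run first; known increments of the analyte are then added directly to aliquots of the sample, and each spiked aliquot is re-run. Plotting response against added concentration and extrapolating the line back to zero response gives an x-axis intercept whose absolute value is the analyte concentration in the original sample.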

Most useful when:

- Sample matrix is too complex for straightforward internal standard

- Matrix effects significantly impact detector response

- Matched-matrix calibration is impractical or unavailable

Peer-reviewed research confirms its use in GC-MS/MS to compensate for matrix-induced enhancement or suppression in food commodities.
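Numerically, standard addition is a linear fit of response against added concentration; the original concentration is intercept / slope, the magnitude of the x-intercept. A minimal sketch, assuming a linear response that passes through zero at zero analyte and spiked aliquots brought to the same final volume (so no dilution correction is needed); the spike levels and areas are hypothetical:

```python
def fit_line(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit y = slope*x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


# Hypothetical: unspiked sample plus three spikes (added concentration, mg/kg) and peak areas
added = [0.0, 10.0, 20.0, 30.0]
area = [1500.0, 2510.0, 3490.0, 4500.0]

slope, intercept = fit_line(added, area)
original = intercept / slope  # |x-intercept| of the fitted line
print(f"original concentration ~ {original:.1f} mg/kg")
```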

Key Factors That Affect GC Calibration Accuracy

Calibration Gas/Standard Quality and NIST Traceability

The accuracy of any GC calibration is bounded by the accuracy of the reference standards used. An impure or inaccurately blended calibration gas introduces systematic error that cannot be corrected downstream.

NIST Special Publication 260-126 defines a NIST Traceable Reference Material (NTRM) as a reference material produced by a commercial supplier with a well-defined traceability linkage to NIST. All sources of uncertainty must be included in the final uncertainty statement, and certification periods vary (e.g., 8 years for non-reactive mixtures, 2-4 years for reactive gases per NIST SP 260-222).

Reactive gas mixtures present particular stability challenges—they can degrade over time in cylinders that haven't been treated appropriately. Proprietary internal cylinder treatment processes — such as those used by specialty gas suppliers like SpecGas Inc. — can significantly extend the usable shelf life of reactive mixtures containing gases like formaldehyde, nitric oxide, H₂S, and chlorine.

Carrier Gas Flow Rate Stability and Purity

Carrier gas flow rate directly affects retention time and peak area reproducibility. Fluctuations in flow rate between standard runs and sample runs introduce systematic error into calibration curves.

High-purity carrier gas (helium, hydrogen, or nitrogen) is required; Agilent recommends 99.9995% purity or better. Gas purification traps on the carrier line help maintain that purity:

- Hydrocarbon traps to prevent interference with FID baselines

- Moisture traps to protect column stationary phase

- Oxygen traps to prevent detector and column degradation

Column Oven Temperature Accuracy and Detector Operating Conditions

Temperature affects analyte partitioning in the column and therefore retention time and peak resolution. If oven temperature deviates from the set point used during calibration standard runs, both retention time and peak area will shift—causing calibration curve errors or identification failures.

Detector Linear Dynamic Ranges:

| Detector Type | Linear Dynamic Range | Typical Analytes |
|---|---|---|
| Flame Ionization Detector (FID) | >10⁷ (±10%) | Most organic compounds |
| Thermal Conductivity Detector (TCD) | 10⁵ (±5% to ±10%) | Permanent gases, light hydrocarbons |
| Micro-Electron Capture Detector (µECD) | 5 × 10⁴ | Halogenated organics, electrophilic compounds |

Exceeding the linear dynamic range invalidates the calibration curve — detector response is no longer proportional to analyte concentration, which directly connects to how you establish your calibration range.

Calibration Range and Linearity

Calibration curves are only valid within the concentration range over which they were established. Extrapolating beyond the validated range is a common error. Best practice calls for at least five concentration levels that bracket the expected sample range, keeping all points within the detector's confirmed linear region.

LCGC warns that relying solely on the correlation coefficient to validate a calibration curve is insufficient. Unweighted linear regression can produce large relative errors at the low end of the curve — errors that a high correlation coefficient will not flag, allowing a flawed calibration to pass standard method criteria.
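The effect LCGC describes is easy to reproduce. The sketch below fits the same hypothetical five-level data with ordinary least squares and with 1/x weighting (a common choice, used here as an assumption rather than a universal rule), then back-calculates each standard and reports its relative error. The data have r above 0.999, yet the unweighted fit reads the lowest standard more than 20% high, while the 1/x-weighted fit keeps it within about 1%.

```python
import math


def weighted_fit(x: list[float], y: list[float], w: list[float]) -> tuple[float, float]:
    """Weighted least-squares fit y = slope*x + intercept; equal weights give ordinary least squares."""
    sw = sum(w)
    xm = sum(wi * xi for wi, xi in zip(w, x)) / sw
    ym = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - xm) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - xm) * (yi - ym) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    return slope, ym - slope * xm


def pearson_r(x: list[float], y: list[float]) -> float:
    """Unweighted correlation coefficient r."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical data: 100 area units per unit concentration at the low end,
# with 1-2% scatter at the two highest levels
conc = [1.0, 5.0, 10.0, 50.0, 100.0]
area = [100.0, 500.0, 1000.0, 4900.0, 10100.0]

fits = {
    "unweighted": weighted_fit(conc, area, [1.0] * len(conc)),
    "1/x weighted": weighted_fit(conc, area, [1.0 / c for c in conc]),
}

print(f"r = {pearson_r(conc, area):.5f}")
for name, (slope, intercept) in fits.items():
    # Back-calculate each standard from the fitted line and report its relative error
    errors = [100.0 * ((a - intercept) / slope - c) / c for c, a in zip(conc, area)]
    print(f"{name:13s}", [f"{e:+.1f}%" for e in errors])
```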

When to Calibrate and Common Mistakes to Avoid

Calibration Frequency

Verification frequency differs by parameter:

Monthly verification:

- Carrier gas flow rate accuracy

Every six months or after major maintenance:

- Detector linearity

- Auto-injector performance

- Column oven temperature accuracy

Every 12 hours (EPA Method 8260C):

- Continuing Calibration Verification (CCV) standard

Regulatory environments (e.g., GMP pharma labs) may impose more stringent requirements. ISO/IEC 17025 requires equipment to be calibrated when its measurement accuracy or measurement uncertainty affects the validity of reported results.
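A continuing calibration verification compares the result for a check standard against its known value using the initial calibration. A minimal sketch of a percent-difference check; the 20% limit is a placeholder parameter, because the actual criterion (and whether it is expressed as percent difference of response factors or percent drift of calculated concentration) comes from the method being followed. Analytes and values are hypothetical:

```python
def percent_difference(expected: float, observed: float) -> float:
    """Signed percent difference of the observed value from the expected value."""
    return 100.0 * (observed - expected) / expected


def ccv_passes(expected: float, observed: float, limit_pct: float = 20.0) -> bool:
    """True if the CCV result is within ±limit_pct % of the expected value."""
    return abs(percent_difference(expected, observed)) <= limit_pct


# Hypothetical CCV: 50 ppb standard, concentrations calculated from the initial calibration
for analyte, found in {"benzene": 46.5, "toluene": 61.2}.items():
    d = percent_difference(50.0, found)
    verdict = "PASS" if ccv_passes(50.0, found) else "FAIL: corrective action needed"
    print(f"{analyte}: {d:+.1f}% -> {verdict}")
```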

Triggers for Recalibration

Immediate recalibration is required outside the standard schedule when:

- Hardware replacement or repair

- Significant changes to operating conditions (column swap, detector change)

- Instrument relocation

- Out-of-calibration findings during system suitability checks

- Extended periods of non-use

Knowing when to calibrate keeps your schedule on track — but how you calibrate determines whether results are defensible. These are the mistakes that most often undermine otherwise sound calibration programs.

Common Mistakes

Using insufficient calibration points — Fewer than 5 points or a range that doesn't bracket sample concentration leads to unreliable regression.

Failing to verify standard purity and stability — Standards that have degraded or are outside their certified use date introduce reference error that cannot be identified from calibration data alone. NIST-traceable standards with documented stability — such as SpecGas's proprietary treated cylinders for reactive gases — prevent that reference error from carrying silently into reported results.

Conflating system suitability with calibration — Running a single standard point to confirm retention time and peak area is system suitability, not calibration. A full calibration curve must be constructed and validated against linearity acceptance criteria.

Applying area percent normalization by default — Data systems frequently apply area percent as the default output, but this method assumes all analytes are detected, peaks are pure, and detector response is uniform. Reporting area percent without validating those assumptions can produce analytically invalid quantitative data.

Frequently Asked Questions

How often should you calibrate a gas chromatograph?

Carrier gas flow accuracy is typically verified monthly, while detector linearity, column oven temperature, and auto-injector performance are calibrated every six months or following major repair or maintenance events. Regulatory or method-specific requirements may mandate additional frequency.

What is used for GC calibration?

GC calibration uses certified reference standards at known concentrations, typically NIST-traceable calibration gas standards for gas-phase analysis or certified liquid standard solutions. Instrument parameter verification requires calibrated instruments such as temperature probes and flow meters.

What are the 5 points of calibration in GC?

"5 points" refers to running a reference standard at five concentration levels to build a statistically sound calibration curve, with each level at a different known concentration across the expected sample range. ICH Q2(R1) recommends a minimum of five concentrations for establishing linearity, and EPA Method 8260C likewise calls for at least five calibration levels.

Why is a calibration factor needed?

A calibration factor (or response factor) corrects for the fact that different analytes produce different detector signals per unit concentration. Without this correction, area percent values would misrepresent the actual composition of a sample, particularly when using detectors like FID that do not respond equally to all compound types.

What is the difference between a calibration factor and a response factor?

According to Arizona Department of Health Services guidance, a Response Factor (RF) describes a detector's signal output relative to the amount of a specific compound. A Calibration Factor (CF) is a correction coefficient derived from comparing that response to a known standard. In practice, response factors are used within calibration calculations to normalize or correct raw detector signal.
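One widely used convention, from EPA SW-846 Method 8000, makes the distinction concrete: a calibration factor is used with external standard calibration and is the peak area per unit amount of analyte, CF = A_s / M_s, while a response factor is used with internal standard calibration and compares the analyte's response with the internal standard's, RF = (A_s × C_is) / (A_is × C_s). A minimal sketch with hypothetical areas and amounts:

```python
def calibration_factor(area: float, amount: float) -> float:
    """External standard: peak area per unit amount of analyte injected."""
    return area / amount


def response_factor(area_s: float, conc_is: float, area_is: float, conc_s: float) -> float:
    """Internal standard: RF = (A_s * C_is) / (A_is * C_s)."""
    return (area_s * conc_is) / (area_is * conc_s)


def conc_from_rf(area_x: float, conc_is: float, area_is: float, rf: float) -> float:
    """Sample amount from an internal-standard RF: C_x = (A_x * C_is) / (A_is * RF)."""
    return (area_x * conc_is) / (area_is * rf)


# Hypothetical standard: 50 ng analyte (area 25000), internal standard 20 ng (area 12500).
# Units only need to match between analyte and internal standard.
cf = calibration_factor(25000.0, 50.0)
rf = response_factor(25000.0, 20.0, 12500.0, 50.0)
sample = conc_from_rf(15000.0, 20.0, 12500.0, rf)  # hypothetical sample injection
print(cf, rf, sample)
```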

What are the four types of calibration in GC?

The four most commonly cited GC calibration methods are external standard, internal standard, standard addition, and normalization. Each differs in how it accounts for detector response variability and sample matrix effects; a fifth method, area percent with response factors, is also widely used in practice.