Introduction

Primary standard gas flow calibration measures gas flow rate directly from fundamental physical quantities—mass, volume, time, pressure, and temperature—without reference to another flow standard. This establishes the highest level of accuracy for verifying and correcting flow meters under test.

This guide is written for calibration engineers, lab managers, and quality professionals in emissions monitoring, semiconductor manufacturing, air quality testing, and research. In these fields, an uncalibrated or drifting flow meter has real consequences: data integrity failures, regulatory penalties ranging from $5,000 to $25,000 for inaccurate greenhouse gas reporting, or product contamination that costs far more.

This guide covers how primary standard calibration works, the three main methods (PVTt, gravimetric, and piston prover), best practices for achieving uncertainty below 0.1%, and common program management mistakes—including the critical role that NIST-traceable calibration gas quality plays throughout the process.

Key Takeaways

- Primary standard calibration measures flow directly from first principles (mass, volume, time)—no reference meter required, placing it at the top of the traceability chain

- Three core methods: PVTt (pressure-volume-temperature-time), gravimetric (direct mass measurement), and piston/bell prover (positive displacement)

- Uncertified calibration gas introduces density errors that propagate directly into flow calculations, undermining NIST traceability

- Key best practices: achieve steady-state flow before measuring, eliminate all leaks, and match gas composition to actual operating conditions

- Secondary standards with documented traceability are sufficient for many process monitoring applications—primary calibration isn't always the right tool

What Is Primary Standard Gas Flow Calibration?

Primary standard gas flow calibration directly realizes the measurement of mass flow (or volumetric flow) from SI base quantities (length, mass, time, and temperature) and quantities derived from them, such as pressure, without calibration against another flow reference. The result is a flow measurement with known, documented uncertainty traceable to a national metrology institute such as NIST.

The process generates a calibration factor or correction curve for the meter under test (MUT), confirming accurate readings within its specified flow range or identifying systematic errors that need correction. Expanded uncertainties typically range from 0.02% to 0.13% at k=2 (approximately 95% confidence), depending on flow range and method.

This distinction matters when choosing between primary and secondary standards. A working standard (secondary standard) is a calibrated flow meter—such as a critical flow venturi or laminar flow element—used to calibrate other meters. It has been calibrated against a primary standard but does not independently derive flow from first principles. Primary calibration offers the lowest available uncertainty; secondary standards trade some accuracy for operational convenience and faster turnaround.

Why Industries Rely on Primary Standard Gas Flow Calibration

The Traceability Imperative

In regulated industries—emissions monitoring, semiconductor fabrication, pharmaceutical manufacturing, energy utilities—calibration results must withstand audits, regulatory submissions, and quality certifications like ISO/IEC 17025:2017. Primary standard calibration provides the unbroken traceability chain upon which all secondary methods depend.

Consequences of Inadequate Calibration

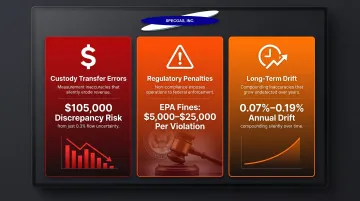

Inaccurate gas flow measurement drives significant financial and compliance exposure:

- Custody transfer errors: A combined uncertainty of just 0.3% can introduce a $105,000 discrepancy in a 700,000-barrel-of-oil-equivalent transaction (at an implied price of $50 per barrel)

- Regulatory penalties: Greenhouse gas reporting errors expose operators to fines of $5,000–$25,000 per violation under applicable regulations

- Long-term drift: NIST historical data shows that critical flow nozzles drift with a standard deviation of 0.07% per year, while laminar flowmeters exhibit 0.19% per year drift—deviations that compound over time and compromise process control
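The custody-transfer and drift figures above come down to simple arithmetic. The Python sketch below reproduces the $105,000 figure using the $50-per-barrel price those numbers imply (the price itself is not stated in the source) and projects cumulative drift under the simplifying, hypothetical assumption that drift accumulates linearly from year to year. Function names are illustrative only.

```python
def transaction_exposure(volume_boe: float, price_per_boe: float, rel_uncertainty: float) -> float:
    """Dollar value at risk when a transaction is measured with the given relative uncertainty."""
    return volume_boe * price_per_boe * rel_uncertainty


def projected_drift_pct(drift_pct_per_year: float, years: float) -> float:
    """Cumulative drift in percent, assuming (hypothetically) linear accumulation."""
    return drift_pct_per_year * years


if __name__ == "__main__":
    # 0.3% of a 700,000 BOE transaction at an implied $50/BOE
    print(round(transaction_exposure(700_000, 50.0, 0.003)))  # 105000
    # Drift after a 3-year interval for the two meter types cited above
    print(round(projected_drift_pct(0.07, 3), 2))  # critical flow nozzle: 0.21
    print(round(projected_drift_pct(0.19, 3), 2))  # laminar flowmeter: 0.57
```

Linear projection is the pessimistic reading of "compounding" drift; if year-to-year drift were independent and random, the spread would grow more slowly (roughly with the square root of time).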

The Critical Role of Calibration Gas Quality

The calibration gas flowing through the system during testing must have certified, known composition and purity. PVTt and gravimetric methods calculate density from molecular weight and compressibility factor—errors in gas composition propagate directly into flow calculations.

For example, NIST's PVTt systems use dry air with a molecular weight relative standard uncertainty of 190 × 10⁻⁶, primarily driven by water vapor variability. Maintaining humidity below 3% is critical to controlling this uncertainty.
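Because density enters the flow calculation directly, composition errors propagate one-for-one. The sketch below, a minimal illustration rather than a NIST procedure, evaluates ρ = pM/(ZRT) and combines relative input uncertainties in quadrature. The dry-air molar mass of 28.9647 g/mol is a commonly used nominal value, Z = 1 is an ideal-gas simplification, and the function names are hypothetical.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol*K)


def gas_density(p_pa: float, t_k: float, molar_mass: float, z: float = 1.0) -> float:
    """Gas density rho = p*M / (Z*R*T) in kg/m^3 (molar_mass in kg/mol)."""
    return p_pa * molar_mass / (z * R * t_k)


def rel_density_uncertainty(u_m: float, u_p: float = 0.0, u_t: float = 0.0, u_z: float = 0.0) -> float:
    """Relative standard uncertainty of rho from uncorrelated relative inputs.

    Each input enters rho = p*M/(Z*R*T) with a sensitivity coefficient of magnitude 1,
    so a relative error in M passes straight through to rho (and to the calculated flow).
    """
    return math.sqrt(u_m**2 + u_p**2 + u_t**2 + u_z**2)


if __name__ == "__main__":
    rho = gas_density(101_325.0, 293.15, 0.0289647)  # dry air, ideal-gas assumption (Z = 1)
    print(f"{rho:.3f} kg/m^3")  # 1.204 kg/m^3
    # Molar-mass term alone: 190e-6 relative in M gives 190e-6 relative in rho
    print(rel_density_uncertainty(190e-6))
```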

That level of compositional precision places real demands on the gas supplier. Certified suppliers like SpecGas Inc. address this by producing calibration gas mixtures via gravimetric blending with documented cylinder treatment processes that preserve reactive gas stability. Each mixture carries direct NIST traceability, so the composition uncertainty entering your flow calculation is quantified and controlled—not assumed.

When Primary Standard Calibration Is Required

- Mass flow controllers (MFCs) in semiconductor and emissions monitoring: typically an industry requirement

- General industrial flow monitoring: best practice but not always mandated

- Small-error detection: primary calibration quantifies drift with 0.02%–0.1% uncertainty, revealing small but consequential errors that secondary methods miss

How Primary Standard Gas Flow Calibration Works

Conceptual Framework

All primary gas flow standards measure flow by tracking the accumulation (or depletion) of gas mass or volume over a precisely measured time interval. The ratio of accumulated mass (or volume corrected to reference conditions) to elapsed time gives the reference flow rate, which is compared to the MUT's output.
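In code, the comparison described above is a ratio followed by a percent deviation. This is a minimal sketch with hypothetical names and made-up numbers; a real system would also apply the connecting-volume, leak, and condition corrections discussed later in this guide.

```python
def reference_flow(accumulated: float, elapsed_s: float) -> float:
    """Reference flow: accumulated mass (or corrected volume) divided by collection time."""
    if elapsed_s <= 0:
        raise ValueError("elapsed time must be positive")
    return accumulated / elapsed_s


def mut_error_pct(indicated: float, reference: float) -> float:
    """Deviation of the meter under test from the primary standard, in percent of reference."""
    return 100.0 * (indicated - reference) / reference


if __name__ == "__main__":
    ref = reference_flow(0.060, 60.0)  # 0.060 kg collected in 60 s -> 0.001 kg/s
    print(f"{mut_error_pct(0.001005, ref):+.2f} %")  # +0.50 %
```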

Key Principle: Conservation of mass governs calibration accuracy. The flow measured by the primary standard must account for all gas—including volume stored in connecting lines, leaks, and temperature or pressure changes during measurement. Leak testing the system before calibration runs is non-negotiable.

Step 1: Setup and Stabilization

Install the MUT in the calibration rig and bring the gas supply to stable operating conditions (target pressure, temperature, and flow rate). Evacuate or pre-condition the collection volume as required and verify leak-tightness of the entire system.

Critical requirement: Calibration must not begin until steady-state flow conditions are confirmed. Unsteady flow introduces uncertainty that no post-processing can fully correct.
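One way to make "steady state confirmed" objective is to test a window of readings for both scatter and slow drift before starting a collection. The sketch below does that; the 0.05% limits and the half-window comparison are hypothetical choices for illustration, not values from any standard.

```python
import statistics


def is_steady(readings: list[float], rel_std_limit: float = 5e-4, rel_shift_limit: float = 5e-4) -> bool:
    """Hypothetical steady-state test on a window of flow readings.

    Passes when (1) the relative standard deviation is within rel_std_limit and
    (2) the means of the first and second halves differ by no more than rel_shift_limit,
    a crude guard against slow ramps.
    """
    if len(readings) < 4:
        return False  # too few points to judge stability
    mean = statistics.fmean(readings)
    if statistics.stdev(readings) / abs(mean) > rel_std_limit:
        return False
    half = len(readings) // 2
    shift = abs(statistics.fmean(readings[half:]) - statistics.fmean(readings[:half]))
    return shift / abs(mean) <= rel_shift_limit


if __name__ == "__main__":
    print(is_steady([100.0, 100.01, 99.99, 100.0, 100.02, 99.98]))  # True
    print(is_steady([100.0, 100.5, 101.0, 101.5, 102.0, 102.5]))   # False (ramping)
```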

Step 2: Primary Standard Flow Measurement

Three primary methods are in use, each suited to different flow ranges and accuracy requirements:

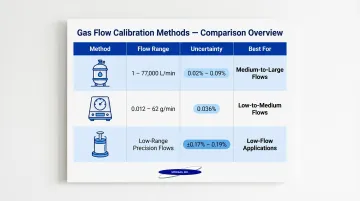

1. PVTt (Pressure-Volume-Temperature-time)

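Gas is diverted into a collection tank of known volume. The tank's initial and final gas densities are calculated from pressure and temperature measurements; the density change multiplied by the tank volume gives the accumulated mass, and dividing by the collection time gives the mass flow.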

- Flow range: 1 to 77,000 L/min (NIST operates 34 L, 677 L, and 26 m³ systems)

- Uncertainty: 0.02% to 0.09% (k=2), depending on system size and gas

- Best for: medium-to-large flow ranges

Inventory Mass Cancellation: NIST's PVTt systems match pre-filling and post-filling thermodynamic conditions in the connecting (inventory) volume. This cancels correlated sensor errors, substantially reducing uncertainty from pressure and temperature fluctuations in connecting lines.
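The core PVTt calculation can be sketched in a few lines: compute the tank density before and after collection, multiply the change by the tank volume, and divide by the collection time. This sketch assumes ideal-gas behavior (Z = 1) by default, a rigid tank, and no connecting-volume correction. The 677 L tank size mirrors the NIST system mentioned above; the pressures, temperatures, and time are made-up example values.

```python
R = 8.314462618  # molar gas constant, J/(mol*K)


def density(p_pa: float, t_k: float, molar_mass: float, z: float = 1.0) -> float:
    """Gas density in kg/m^3 from rho = p*M / (Z*R*T)."""
    return p_pa * molar_mass / (z * R * t_k)


def pvtt_mass_flow(tank_volume_m3: float, p_i: float, t_i: float, p_f: float, t_f: float,
                   elapsed_s: float, molar_mass: float, z_i: float = 1.0, z_f: float = 1.0) -> float:
    """Mass flow (kg/s) from the change in gas density inside a tank of known volume.

    Ignores the connecting (inventory) volume and any tank expansion with pressure.
    """
    delta_rho = density(p_f, t_f, molar_mass, z_f) - density(p_i, t_i, molar_mass, z_i)
    return tank_volume_m3 * delta_rho / elapsed_s


if __name__ == "__main__":
    # Nitrogen (M = 0.0280134 kg/mol) filling a 0.677 m^3 tank from 5 kPa to 100 kPa in 60 s
    q = pvtt_mass_flow(0.677, 5_000.0, 296.15, 100_000.0, 297.15, 60.0, 0.0280134)
    print(f"{q * 1000:.2f} g/s")  # roughly 12.15 g/s
```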

2. Gravimetric

The collection cylinder is weighed before and after gas flows through the MUT. Change in mass divided by elapsed time gives mass flow.

- Flow range: 0.012 to 62 g/min (typical for low-flow systems)

- Uncertainty: 0.036% (k=2) for advanced dynamic gravimetric systems

- Best for: low-to-medium flow ranges requiring very low uncertainty

Dynamic gravimetric systems weigh the tank continuously while gas flows, eliminating complex diverter valves and buoyancy corrections.
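For the gravimetric method, the static case is a difference of two weighings, while a continuously weighed tank lends itself to a least-squares slope of mass against time. The sketch below shows both; buoyancy and other corrections are omitted, absolute values are used so it works whether the weighed cylinder fills or empties, and the example data are invented.

```python
def static_mass_flow(m_before_kg: float, m_after_kg: float, elapsed_s: float) -> float:
    """Mass flow (kg/s) from weighings before and after a timed collection."""
    return abs(m_after_kg - m_before_kg) / elapsed_s


def dynamic_mass_flow(times_s: list[float], masses_kg: list[float]) -> float:
    """Mass flow (kg/s) as the magnitude of the least-squares slope of mass vs. time."""
    n = len(times_s)
    if n != len(masses_kg) or n < 2:
        raise ValueError("need at least two paired time/mass readings")
    t_mean = sum(times_s) / n
    m_mean = sum(masses_kg) / n
    sxx = sum((t - t_mean) ** 2 for t in times_s)
    sxy = sum((t - t_mean) * (m - m_mean) for t, m in zip(times_s, masses_kg))
    return abs(sxy / sxx)


if __name__ == "__main__":
    times = [0.0, 10.0, 20.0, 30.0, 40.0]
    masses = [10.000, 9.990, 9.980, 9.970, 9.960]  # supply cylinder losing 1 g every 10 s
    print(static_mass_flow(masses[0], masses[-1], times[-1] - times[0]))
    print(dynamic_mass_flow(times, masses))
```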

3. Piston/Bell Prover

A piston or inverted bell of known cross-sectional area moves at measured velocity to displace a known volume of gas at constant pressure.

- Flow range: 3.7×10⁻⁵ to 1.44 m³/min (depending on prover size)

- Uncertainty: ±0.17% to ±0.19% (k=2)

- Best for: low-flow precision applications

NIST protocols require that leaks exceeding 0.010% of minimum measured flow be identified and corrected.
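A piston prover's volumetric flow is the displaced volume (cross-sectional area times travel) divided by the measured time, and the 0.010% leak criterion is a simple threshold. The sketch below assumes a circular piston and reports flow at prover conditions only; correction to reference pressure and temperature is left out, and all names and numbers are illustrative.

```python
import math


def prover_flow_m3_per_min(piston_diameter_m: float, travel_m: float, elapsed_s: float) -> float:
    """Volumetric flow (m^3/min) from displaced volume (area x travel) over the measured time."""
    area_m2 = math.pi * piston_diameter_m**2 / 4.0
    return area_m2 * travel_m / elapsed_s * 60.0


def leak_acceptable(leak_rate: float, min_measured_flow: float, limit_fraction: float = 1e-4) -> bool:
    """True if the leak is within 0.010% (1e-4) of the minimum measured flow, in the same units."""
    return leak_rate <= limit_fraction * min_measured_flow


if __name__ == "__main__":
    print(prover_flow_m3_per_min(0.1, 0.2, 10.0))  # about 0.0094 m^3/min
    print(leak_acceptable(5e-5, 1.0), leak_acceptable(2e-4, 1.0))  # True False
```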

Step 3: Comparison, Correction, and Documentation

Compare the primary standard's measured flow to the MUT's indicated flow at multiple set points—typically 5 flow rates, measured in both increasing and decreasing order for reproducibility.

Calculate the calibration factor or deviation curve, then document the following for each run:

- Gas type and certified composition

- Temperature, pressure, and humidity

- Date and calibration interval

- Instruments used and their calibration status
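The comparison step can be sketched as a percent error and a calibration factor at each set point, plus the spread between the ascending and descending passes as a simple reproducibility check. The `CalPoint` structure, function names, and data below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CalPoint:
    set_point: float  # nominal flow for this point
    reference: float  # flow measured by the primary standard
    indicated: float  # flow reported by the MUT


def error_pct(p: CalPoint) -> float:
    """MUT deviation from the reference, in percent of reference."""
    return 100.0 * (p.indicated - p.reference) / p.reference


def calibration_factor(p: CalPoint) -> float:
    """Multiplier that converts the MUT reading to the reference flow."""
    return p.reference / p.indicated


def up_down_spread(ascending: list[CalPoint], descending: list[CalPoint]) -> dict[float, float]:
    """Absolute difference in % error between ascending and descending passes at each set point."""
    down = {p.set_point: error_pct(p) for p in descending}
    return {p.set_point: abs(error_pct(p) - down[p.set_point]) for p in ascending}


if __name__ == "__main__":
    up = [CalPoint(10, 10.0, 10.02), CalPoint(50, 50.0, 50.05)]
    down = [CalPoint(50, 50.0, 50.04), CalPoint(10, 10.0, 10.01)]
    print({p.set_point: round(calibration_factor(p), 5) for p in up})
    print(up_down_spread(up, down))  # about 0.10 % at 10, 0.02 % at 50
```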

NIST calibration reports typically present results in dimensionless format (for example, discharge coefficient vs. Reynolds number) to allow accurate application across varying process conditions.
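As an illustration of the dimensionless format for a critical flow venturi, the discharge coefficient is the reference mass flow divided by the ideal critical flow, and the throat Reynolds number is 4q_m/(πdμ). This sketch uses the perfect-gas critical flow function; high-accuracy work normally uses a real-gas critical flow function instead. The throat diameter, stagnation conditions, reference flow, and the air viscosity of about 1.82 × 10⁻⁵ Pa·s are assumed example values.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol*K)


def ideal_critical_flow_function(gamma: float) -> float:
    """Perfect-gas C* = sqrt(gamma * (2/(gamma+1))**((gamma+1)/(gamma-1)))."""
    return math.sqrt(gamma * (2.0 / (gamma + 1.0)) ** ((gamma + 1.0) / (gamma - 1.0)))


def ideal_cfv_mass_flow(throat_d_m: float, p0_pa: float, t0_k: float, molar_mass: float, gamma: float) -> float:
    """Ideal one-dimensional critical mass flow (kg/s) through the venturi throat."""
    area = math.pi * throat_d_m**2 / 4.0
    return area * ideal_critical_flow_function(gamma) * p0_pa / math.sqrt(R * t0_k / molar_mass)


def discharge_coefficient(q_ref: float, throat_d_m: float, p0_pa: float, t0_k: float,
                          molar_mass: float, gamma: float) -> float:
    """Cd = reference mass flow / ideal critical mass flow."""
    return q_ref / ideal_cfv_mass_flow(throat_d_m, p0_pa, t0_k, molar_mass, gamma)


def throat_reynolds(q_m: float, throat_d_m: float, viscosity_pa_s: float) -> float:
    """Throat Reynolds number Re = 4*q_m / (pi * d * mu)."""
    return 4.0 * q_m / (math.pi * throat_d_m * viscosity_pa_s)


if __name__ == "__main__":
    q_ref = 0.00915  # kg/s from the primary standard (example value)
    cd = discharge_coefficient(q_ref, 0.005, 200_000.0, 295.15, 0.0289647, 1.4)
    re = throat_reynolds(q_ref, 0.005, 1.82e-5)
    print(f"Cd = {cd:.4f} at Re = {re:.3g}")
```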

Best Practices for Primary Standard Gas Flow Calibration

Best Practice 1: Match Standard Accuracy to Measurement Need

The calibration standard must be significantly more accurate than the MUT. The traditional rule of thumb is a 4:1 accuracy ratio: the standard's uncertainty should be no more than one-quarter of the MUT's acceptable tolerance.

The legacy 4:1 Test Uncertainty Ratio (TUR) from MIL-STD-45662A is no longer a strict mandate under ISO/IEC 17025:2017 or ANSI/NCSL Z540.3. Modern standards emphasize risk-informed decision rules with a Probability of False Accept (PFA) ≤ 2% using Root-Sum-Square (RSS) uncertainty calculations and k=2 coverage factors. If estimating PFA is not practicable, a TUR ≥4:1 remains acceptable as a fallback.
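Under a risk-based decision rule, the quantity of interest is the probability of false accept rather than the TUR itself. The sketch below computes a TUR and estimates a global PFA with the common bivariate-normal model: zero-mean normal errors for both the meter population and the measurement, symmetric tolerance limits, and an assumed end-of-period reliability (EOPR). It is a simplified illustration, not a validated implementation of ANSI/NCSL Z540.3.

```python
from statistics import NormalDist


def tur(tolerance: float, expanded_u: float) -> float:
    """Test uncertainty ratio for symmetric limits: tolerance / U (U at k = 2)."""
    return tolerance / expanded_u


def global_pfa(tur_value: float, eopr: float, steps: int = 4000) -> float:
    """Estimate PFA = P(|x| > L and |x + e| <= L) with the bivariate-normal model.

    x: meter error, normal with sigma chosen so a fraction `eopr` lies within +/-L.
    e: measurement error, normal with sigma = U/2, where U = L/TUR.
    The x > L tail is integrated with the midpoint rule and doubled for symmetry.
    """
    limit = 1.0  # work in units of the tolerance limit
    sigma_x = limit / NormalDist().inv_cdf((1.0 + eopr) / 2.0)
    sigma_e = (limit / tur_value) / 2.0
    uut = NormalDist(0.0, sigma_x)
    meas = NormalDist(0.0, sigma_e)
    upper = limit + 10.0 * sigma_x
    h = (upper - limit) / steps
    total = 0.0
    for i in range(steps):
        x = limit + (i + 0.5) * h
        # probability that the measured value x + e lands back inside [-L, L]
        accept = meas.cdf(limit - x) - meas.cdf(-limit - x)
        total += uut.pdf(x) * accept * h
    return 2.0 * total


if __name__ == "__main__":
    print(tur(0.5, 0.125))  # 4.0
    print(f"PFA at TUR 4:1, EOPR 95%: {global_pfa(4.0, 0.95):.2%}")
```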

Best Practice 2: Verify NIST Traceability at Every Level

Traceability applies not only to the flow standard and instrumentation, but to the calibration gas itself. Using a gas mixture with uncertified or poorly documented composition introduces density and compressibility errors that propagate directly into flow calculations.

Calibration programs should source gas blends—including reactive specialty gas mixtures—from suppliers who provide NIST-traceable certificates of analysis.

SpecGas Inc. produces NIST-traceable calibration gases gravimetrically, with certified compositions from 300 ppb to 10% concentration levels—precise enough for accurate density calculations in both PVTt and gravimetric methods. Their proprietary cylinder treatment process extends shelf life for reactive gases like chlorine, H₂S, phosphine, arsine, HCN, and phosgene, keeping certified composition stable across extended calibration intervals.

Best Practice 3: Eliminate and Account for Connecting Volume Effects

Gas stored in piping between the MUT and the primary standard (the "inventory volume") changes in density as pressure and temperature fluctuate during a calibration run. Failing to account for this stored mass introduces systematic error.

Solutions:

- Advanced systems: Use inventory mass cancellation techniques (matching initial and final inventory densities)

- Simpler systems: Minimize connecting volume or explicitly correct for density changes
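For simpler systems, the inventory correction amounts to one extra term in the mass balance: mass through the MUT equals mass collected plus the change in mass stored in the connecting volume. The sketch below assumes ideal-gas density (Z = 1) and a single uniform pressure and temperature in the connecting volume, and shows that matching initial and final conditions makes the term vanish. Names and values are hypothetical.

```python
R = 8.314462618  # molar gas constant, J/(mol*K)


def density(p_pa: float, t_k: float, molar_mass: float) -> float:
    """Ideal-gas density in kg/m^3."""
    return p_pa * molar_mass / (R * t_k)


def inventory_mass_change(v_inv_m3: float, p_i: float, t_i: float, p_f: float, t_f: float,
                          molar_mass: float) -> float:
    """Mass gained (+) or lost (-) by gas stored in the connecting volume during a run."""
    return v_inv_m3 * (density(p_f, t_f, molar_mass) - density(p_i, t_i, molar_mass))


def mass_through_mut(collected_kg: float, inventory_change_kg: float) -> float:
    """Conservation of mass: gas that passed the MUT ended up in the tank or in the piping."""
    return collected_kg + inventory_change_kg


if __name__ == "__main__":
    # Matched start/end conditions: the inventory term cancels exactly
    print(inventory_mass_change(0.002, 150_000.0, 296.0, 150_000.0, 296.0, 0.0280134))  # 0.0
    # Unmatched: 10 kPa rise in a 2 L connecting volume adds stored mass
    print(inventory_mass_change(0.002, 150_000.0, 296.0, 160_000.0, 296.0, 0.0280134))
```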

Best Practice 4: Calibrate Under Realistic Operating Conditions

The viscosity, density, and flow profile of the gas during calibration should match the gas used in the actual process application. Calibrating a mass flow controller with nitrogen and then running it on a reactive specialty gas without an appropriate correction factor introduces systematic error.

Requirements:

- Specify gas composition, temperature, and pressure conditions on calibration certificates

- Note any limitations when used outside calibrated conditions

- Use calibration gases balanced in appropriate carrier gases (nitrogen, argon, helium, dry air) to match process applications
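Checking an operating point against the conditions stated on a certificate is easy to automate. The sketch below uses a hypothetical certificate record and flags gas, temperature, and pressure mismatches; the gas correction factor is an input supplied by the user (for example, from the meter manufacturer), not something this code derives.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CalibratedConditions:
    """Hypothetical record of the conditions stated on a calibration certificate."""
    gas: str
    t_min_c: float
    t_max_c: float
    p_min_kpa: float
    p_max_kpa: float


def condition_warnings(cert: CalibratedConditions, gas: str, t_c: float, p_kpa: float) -> list[str]:
    """List every way the current operating point falls outside the calibrated conditions."""
    warnings = []
    if gas != cert.gas:
        warnings.append(f"gas {gas!r} differs from calibration gas {cert.gas!r}; a correction factor is needed")
    if not cert.t_min_c <= t_c <= cert.t_max_c:
        warnings.append(f"temperature {t_c} C outside {cert.t_min_c} to {cert.t_max_c} C")
    if not cert.p_min_kpa <= p_kpa <= cert.p_max_kpa:
        warnings.append(f"pressure {p_kpa} kPa outside {cert.p_min_kpa} to {cert.p_max_kpa} kPa")
    return warnings


def apply_gas_correction(indicated_flow: float, correction_factor: float) -> float:
    """Convert a calibration-gas-basis reading to the process gas using a supplied factor."""
    return indicated_flow * correction_factor


if __name__ == "__main__":
    cert = CalibratedConditions("N2", 15.0, 30.0, 100.0, 300.0)
    print(condition_warnings(cert, "N2", 22.0, 200.0))  # []
    for w in condition_warnings(cert, "Ar", 40.0, 200.0):
        print(w)
```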

Matching thermodynamic properties to the target process requires supplier flexibility on carrier gas selection. SpecGas blends calibration gases balanced in nitrogen, zero air, and other inert carriers to customer specification—so the calibration gas reflects the actual process gas environment.

Best Practice 5: Document Everything and Maintain Calibration Intervals

Retain all calibration records. Drift trends across successive calibrations provide early warning of meter degradation or process changes.

Recalibration Intervals:

- Under ISO/IEC 17025:2017 (Clause 7.8.4.3), a calibration certificate or label must not recommend a recalibration interval unless this has been agreed with the customer, so the burden of setting intervals rests on the equipment owner

- Major MFC vendors (e.g., Alicat, Brooks Instrument) typically recommend 12-month intervals as a baseline

- ILAC-G24 outlines methods—staircase adjustment, control charting, and in-use time tracking—to extend or shorten intervals based on risk and historical drift
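The staircase approach described in ILAC-G24 extends the interval after an in-tolerance result and shortens it after an out-of-tolerance one. The sketch below illustrates that logic; the 1.25 and 0.5 multipliers and the 3 to 24 month bounds are hypothetical choices, not values taken from ILAC-G24.

```python
def next_interval_months(current: float, in_tolerance: bool, extend: float = 1.25,
                         shorten: float = 0.5, min_months: float = 3.0, max_months: float = 24.0) -> float:
    """Staircase adjustment: lengthen after a pass, shorten after a fail, within fixed bounds."""
    proposed = current * (extend if in_tolerance else shorten)
    return max(min_months, min(max_months, proposed))


def interval_history(start: float, results: list[bool]) -> list[float]:
    """Sequence of intervals produced by a series of in/out-of-tolerance results."""
    intervals = [start]
    for ok in results:
        intervals.append(next_interval_months(intervals[-1], ok))
    return intervals


if __name__ == "__main__":
    # Starting from the common 12-month baseline: pass, pass, fail
    print(interval_history(12.0, [True, True, False]))  # [12.0, 15.0, 18.75, 9.375]
```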

Key Factors and Common Misconceptions

Key Factors Affecting Calibration Accuracy

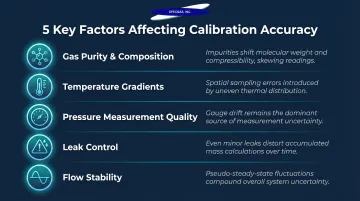

- Gas purity and composition uncertainty: Impurities shift molecular weight and compressibility, directly affecting density-based flow calculations

- Temperature gradients: Gradients in collection tanks or connecting volumes create spatial sampling errors that dominate uncertainty budgets in high-accuracy systems

- Pressure measurement quality: Gauge drift and calibration residuals are among the largest controllable uncertainty sources in PVTt systems

- Leaks: Even small leaks during collection distort accumulated mass measurements; sub-atmospheric systems are most vulnerable

- Flow stability: Pseudo-steady-state fluctuations from imperfect pressure regulators contribute uncertainty that compounds with shorter collection times
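The factors above are usually combined in an uncertainty budget by root-sum-square, which also shows which source dominates. The sketch below assumes uncorrelated contributions with unit sensitivity coefficients; the component values (relative standard uncertainties in percent) are invented for illustration, not taken from any real system.

```python
import math


def uncertainty_budget(components: dict[str, float], k: float = 2.0) -> tuple[float, dict[str, float]]:
    """Combine uncorrelated relative standard uncertainties by root-sum-square.

    Returns the expanded uncertainty U = k * u_c and each source's share of the combined variance.
    """
    variance = sum(u**2 for u in components.values())
    expanded = k * math.sqrt(variance)
    shares = {name: u**2 / variance for name, u in components.items()}
    return expanded, shares


if __name__ == "__main__":
    budget = {
        "gas composition": 0.010,
        "temperature gradients": 0.015,
        "pressure measurement": 0.012,
        "leaks": 0.005,
        "flow stability": 0.008,
    }
    U, shares = uncertainty_budget(budget)
    print(f"U (k=2) = {U:.4f} %")
    print("dominant source:", max(shares, key=shares.get))  # temperature gradients
```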

Beyond these technical factors, two common misconceptions about calibration persist, and both can lead either to under-investing in accuracy or to overspending on it.

Common Misconception 1: "NIST Traceable" and "Primary Standard Calibrated" Are the Same Thing

NIST traceability means a calibration result can be linked to NIST standards through a documented chain. Primary standard calibration is the specific process used at the top of that chain. A working standard meter can be NIST-traceable without being a primary standard.

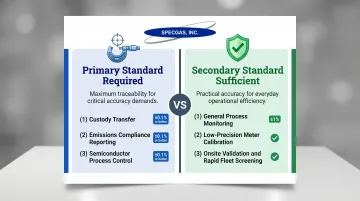

Common Misconception 2: Primary Standard Calibration Is Always Necessary—or Always Overkill

When primary standard calibration is essential:

- Custody transfer applications

- Emissions compliance reporting

- Semiconductor process control requiring ±0.1% accuracy or better

When secondary standards suffice:

- General process monitoring where ±1% accuracy is acceptable

- Low-cost meters whose required precision does not justify the cost of primary calibration

- Onsite validation needs or rapid screening of large meter fleets

Frequently Asked Questions

What is the ISO standard for flow meter calibration?

ISO 9300:2022 governs calibration using critical flow venturi nozzles, specifying geometry, installation, and operating conditions for mass flow determination. ISO/IEC 17025:2017 sets general competence requirements for calibration laboratories. Specific industries follow additional sector-specific standards—oil & gas under API MPMS Chapter 14, natural gas under AGA Reports No. 3, 7, 9, and 11.

What is the NIST standard for calibration?

NIST does not publish a single "calibration standard" document but provides calibration services, reference data, and traceability through its measurement programs. For gas flow, NIST's PVTt, Rate-of-Rise (SLowFlowS), and gravimetric primary standards serve as the national reference. NIST traceability means a calibration is linked to these references through a documented, unbroken chain of comparisons.

What is the difference between a primary standard and a secondary standard in gas flow calibration?

A primary standard derives flow directly from SI base units (mass, length, time) without reference to another flow standard. A secondary (working) standard is a calibrated flow meter used to calibrate other meters—its accuracy depends on its own calibration against a primary standard.

How often should gas flow meters be recalibrated?

Recalibration intervals depend on meter type, application criticality, operating environment, and manufacturer recommendations—commonly ranging from 6 months to 2 years, with 12-month intervals standard for mass flow controllers. ISO/IEC 17025-accredited labs and regulatory applications typically require documented calibration schedules with drift trend analysis.

What gases are used in primary standard gas flow calibration?

Primary standard calibrations are most commonly performed with dry air, nitrogen, argon, or helium because their thermodynamic properties are well-characterized in databases like NIST REFPROP (SRD 23). When calibration must reflect the actual process gas (for example, a reactive mixture), NIST-traceable calibration gas blends with certified composition are required.

Why does calibration gas composition matter for flow calibration accuracy?

In density-based flow measurement methods (PVTt, gravimetric), molecular weight and compressibility factor are used to calculate density—errors in gas composition directly translate into errors in calculated flow. Even small impurity levels introduce systematic errors, which is why certified, NIST-traceable calibration gas standards with documented uncertainty are critical to a valid calibration. SpecGas Inc. produces standards of this kind, designed specifically to meet these requirements.