Introduction

In any process that depends on gas composition—whether it's emissions monitoring, semiconductor fabrication, or air quality testing—the gap between "what's in the mixture" and "what you think is in the mixture" can mean the difference between accurate results and costly errors. The stakes show up in the data:

- Between 2010 and 2014, OSHA documented 9 worker fatalities at oil and gas sites where sudden exposure to hydrocarbon gases exceeding 100,000 ppm proved lethal during manual tank gauging

- In semiconductor manufacturing, gas monitoring failures can cost over 500 hours of tool recovery time annually across a fleet of 75 process tools, with each leading-edge wafer valued at $17,000

- When the EPA used real-time fenceline monitoring data to audit a petroleum refinery in 2025, benzene concentrations reached 290 µg/m³—more than 30 times the 9 µg/m³ action level—resulting in a $35 million settlement

The pattern is clear: when gases and vapors occur in complex multi-component mixtures, qualitative identification alone is not enough. Real-time quantitative analysis is required to measure exact concentrations as they change, enabling immediate corrective action before transient events escalate into regulatory violations, process failures, or safety incidents.

This article covers what real-time quantitative gas analysis is and why it's critical for complex mixtures. It also walks through which analytical techniques make it possible, how the process works step by step, and why calibration standard accuracy underpins everything.

TL;DR

- Real-time quantitative analysis measures exact concentrations of each component in a complex mixture as conditions shift

- Complex mixtures challenge analyzers with overlapping spectral signals, reactive components, and sub-ppm accuracy demands

- Leading techniques include mass spectrometry (GC-MS, quadrupole MS), infrared spectroscopy, and chemometric modeling such as Partial Least Squares Regression (PLSR)

- Accurate results depend on stable, NIST-traceable calibration gas standards — poor standards introduce errors that no analytical method can correct

What Is Real-Time Quantitative Analysis of Complex Gas and Vapor Mixtures?

Real-time quantitative gas analysis is the continuous, near-instantaneous measurement of both the identity and concentration of individual species within a multi-component gas or vapor mixture, without the delays associated with offline sampling and lab analysis. This distinguishes it from qualitative analysis, which only identifies what is present, and from batch or offline methods that introduce delays ranging from minutes to days.

What Makes a Mixture "Complex"

A mixture is considered complex when it contains multiple gases and vapors simultaneously, some with overlapping spectral or mass fingerprints. For example, in FTIR spectroscopy of vehicle exhaust, H₂O and CO₂ have overlapping absorbance bands with target pollutants like NO₂, NH₃, and aldehydes. Compounds with similar molecular weights create additional challenges in mass spectrometry when their fragmentation patterns overlap.

Real industrial and environmental samples are rarely clean two-component mixtures. Several factors drive this complexity:

- Reactive components that degrade over time, requiring stability-certified cylinder treatment to prevent concentration drift

- Concentration ranges spanning from percent levels down to parts-per-billion (ppb), demanding wide dynamic range from instrumentation

- Background interferents and variable matrix compositions that shift apparent concentrations of target species

- Overlapping fragmentation patterns in mass spectrometry when compounds share similar molecular weights

Application Areas

Real-time quantitative analysis is applied across numerous critical sectors:

- Emissions and air quality monitoring for regulatory compliance

- Semiconductor and electronics process control, where gas purity directly impacts yield

- Research laboratories requiring maximum precision

- Power generation facilities managing combustion efficiency

- Confined space safety programs protecting workers from toxic exposures

- Excimer laser gas systems maintaining optimal performance

- Calibration of industrial monitoring equipment

Why Real-Time Quantitative Analysis Is Critical in Gas Monitoring

Measurement Speed and Operational Risk

In applications like emissions compliance, process gas purity for semiconductor manufacturing, or safety monitoring in confined spaces, gas composition can change in seconds. Column-free quadrupole mass spectrometers operate with sampling times from nozzle to detector of less than 10 milliseconds, while FTIR systems provide detection at sub-1-second resolution. Delayed or batch-only analysis misses transient events that can cause regulatory violations, process failures, or safety incidents.

The EPA actively uses real-time fenceline monitoring data to trigger facility-wide audits. In one enforcement case, a refinery failed to implement timely corrective actions after benzene concentrations climbed steadily. The settlement required mandatory installation of real-time benzene fenceline monitors across the facility.

Tampering with Continuous Emission Monitoring Systems (CEMS) adds criminal liability on top of civil penalties. The Berkshire Power Plant incurred $8.25 million in combined criminal and civil penalties after management instructed staff to lower NOx readings by 0.5 ppm to evade Clean Air Act permit limits.

Quantitative Precision and Decision Quality

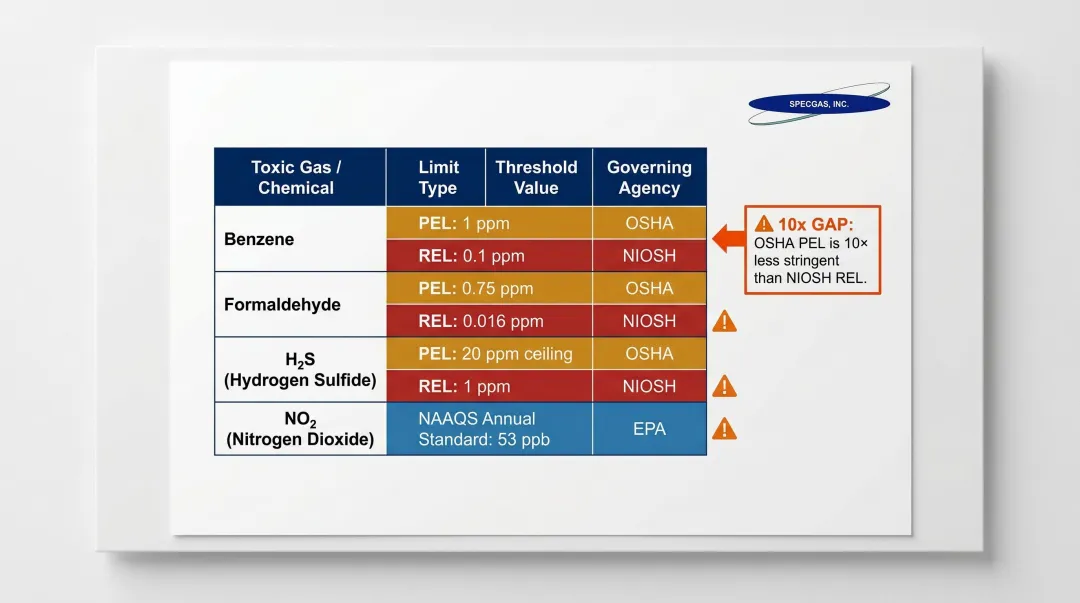

Speed alone isn't enough. Knowing that "some NOx is present" is not actionable. Regulatory and safety thresholds demand exact concentration measurements:

| Gas | Limit Type | Threshold | Agency |
|---|---|---|---|
| Benzene | 8-hr TWA PEL | 1 ppm | OSHA |
| Benzene | TWA REL | 0.1 ppm | NIOSH |
| Formaldehyde | 8-hr TWA PEL | 0.75 ppm | OSHA |
| Formaldehyde | TWA REL | 0.016 ppm | NIOSH |
| H₂S | 10-min Ceiling REL | 10 ppm | NIOSH |
| NO₂ | 1-hr NAAQS | 100 ppb | EPA |

The gap between OSHA Permissible Exposure Limits (PELs) and NIOSH Recommended Exposure Limits (RELs), such as benzene at 1 ppm vs 0.1 ppm and formaldehyde at 0.75 ppm vs 0.016 ppm (16 ppb), means instruments must reliably quantify at the ppb level to satisfy the stricter of the two thresholds.
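As a minimal sketch of how real-time readings feed these limits, the Python below turns logged segments into an 8-hour time-weighted average and compares it with the benzene thresholds from the table. The log format is a hypothetical assumption, and the sketch simplifies by averaging over 8 hours for both limits, even though NIOSH RELs are defined for up to a 10-hour workday.

```python
# Minimal sketch: 8-hour TWA from real-time analyzer readings.
# Assumption (hypothetical log format): a list of (duration_hours, ppm)
# segments; unsampled time within the 8 hours counts as zero exposure.

LIMITS_PPM = {
    # Benzene thresholds from the exposure-limit table.
    "OSHA PEL (8-hr TWA)": 1.0,
    "NIOSH REL (TWA)": 0.1,
}


def twa_8h(readings):
    """Return the 8-hour TWA in ppm from (duration_h, ppm) segments."""
    exposure_ppm_h = sum(hours * ppm for hours, ppm in readings)
    return exposure_ppm_h / 8.0


def exceedances(twa_ppm, limits=LIMITS_PPM):
    """Return the names of the limits that the TWA exceeds."""
    return [name for name, limit in limits.items() if twa_ppm > limit]


# Hypothetical benzene shift: low background plus one 30-minute transient.
shift = [(7.5, 0.05), (0.5, 1.2)]
twa = twa_8h(shift)
print(f"TWA = {twa:.3f} ppm, exceeds: {exceedances(twa)}")
```

In this hypothetical shift, 7.5 hours at 0.05 ppm plus 30 minutes at 1.2 ppm gives 0.975 ppm·h / 8 h ≈ 0.122 ppm: below the OSHA PEL but above the NIOSH REL, which is exactly where ppb-level resolution matters.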

Core Operational and Business Benefits

Real-time quantitative analysis delivers measurable value:

- Enables immediate corrective action when compositions drift outside specification

- Supports continuous regulatory compliance documentation

- Reduces material waste and rework by detecting off-spec gas compositions early

- Improves safety outcomes in confined spaces and hazardous environments

- Provides the data foundation for process optimization and yield improvement

- Reduces reliance on costly, time-delayed laboratory sampling

Key Analytical Techniques Used in Real-Time Quantitative Gas Mixture Analysis

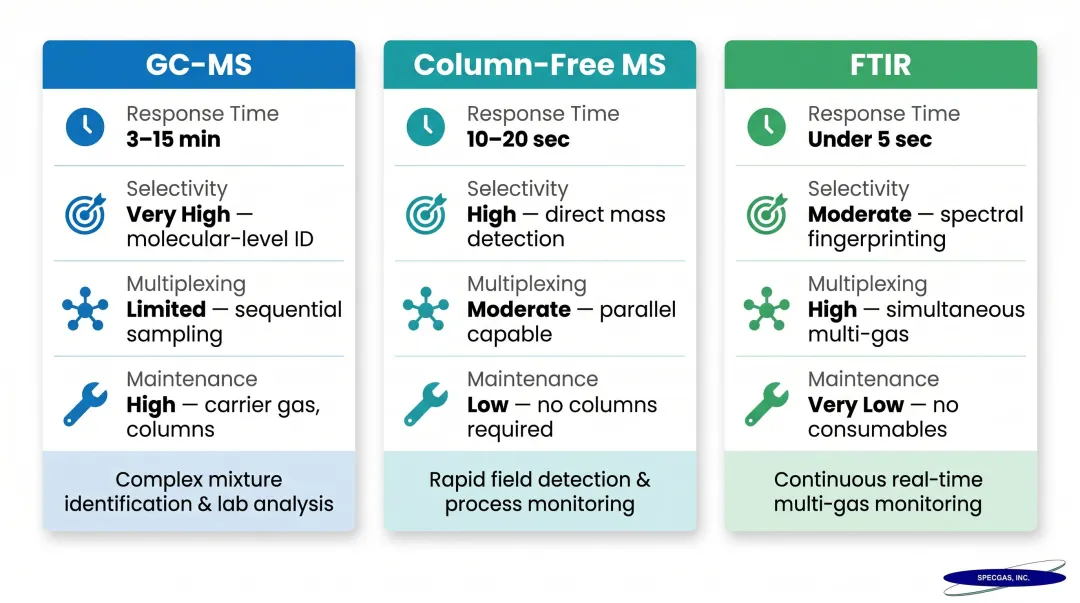

No single technique is universally best. The choice depends on the specific gas species, required detection limits, speed requirements, and whether a chromatographic separation step is feasible.

Quadrupole Mass Spectrometry and GC-MS

Quadrupole mass spectrometers separate ions by mass-to-charge ratio, enabling multi-component detection in seconds to minutes. Column-free QMS sensors can operate at pressures as high as 10–20 mTorr without differential pumping, making them suitable for semiconductor manufacturing applications like chemical vapor deposition (CVD) and plasma etching effluent analysis.

When gas species have overlapping mass fragmentation patterns, a common challenge in complex VOC mixtures, chemometric methods such as Partial Least Squares Regression (PLSR) are applied to deconvolute the signals and extract quantitative concentration data for each component. The same approach works on optical spectra: in one absorption-spectroscopy study of CO/N₂O/CH₄ mixtures, PLSR predicted gas concentrations with up to five times lower calibration error than standard multilinear regression, achieving Root Mean Square Error of Prediction (RMSEP) values of 18 ppm for N₂O and 17 ppm for CO, even when absorption features overlapped by more than 97%.
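To make "deconvolute" concrete, here is a minimal sketch of the simplest baseline: a plain multilinear least-squares fit (classical least squares) of overlapping fragment patterns. It is not PLSR, and not necessarily the exact baseline used in the study. The pattern values and species names are hypothetical, chosen only so that both species contribute to shared channels.

```python
# Minimal sketch: multilinear (classical least squares) deconvolution of
# overlapping fragment patterns. Assumption: the measured signal at each
# channel is a linear sum of per-species reference patterns times
# concentration. All pattern values are HYPOTHETICAL.


def solve(a, b):
    """Solve the square system a x = b by Gaussian elimination with pivoting."""
    n = len(a)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= factor * m[col][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def cls_concentrations(patterns, measured):
    """Least-squares concentrations from the normal equations (P P^T) c = P y.

    patterns: one row per species, one column per channel.
    """
    gram = [[sum(u * v for u, v in zip(pi, pj)) for pj in patterns] for pi in patterns]
    proj = [sum(u * y for u, y in zip(pi, measured)) for pi in patterns]
    return solve(gram, proj)


# Hypothetical patterns (signal per ppm) on three shared channels.
patterns = {
    "species_A": [1.00, 0.00, 0.30],
    "species_B": [0.20, 0.40, 1.00],
}
true_ppm = {"species_A": 50.0, "species_B": 20.0}
measured = [sum(true_ppm[s] * patterns[s][ch] for s in patterns) for ch in range(3)]
estimate = cls_concentrations(list(patterns.values()), measured)
print(dict(zip(patterns, estimate)))  # approximately 50 and 20 ppm
```

With noise-free data and exactly known patterns the fit recovers both concentrations. Real noise, baseline drift, and unmodeled interferents degrade a plain fit like this, which is the setting in which the study reports PLSR outperforming multilinear regression.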

Trade-offs:

| Feature | GC-MS | Column-Free MS | FTIR |
|---|---|---|---|
| Response Time | 3-15 minutes | 10-20 seconds | <5 seconds |
| Selectivity | High (physical separation) | Moderate (mass-to-charge ratio) | Moderate to high (chemometric deconvolution) |
| Multiplexing | Requires multiple units | Up to 64 streams per instrument | Dozens of compounds simultaneously |
| Maintenance | High (carrier gases, columns) | Low (automatic calibration) | Low (stable background) |

GC-MS adds chromatographic separation, improving selectivity for overlapping species at the cost of longer cycle times. Column-free MS approaches combined with multivariate analysis can achieve real-time acquisition but require high-quality calibration datasets built on known reference blends.
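The multiplexing and response-time rows of the table interact. A rough sketch, assuming streams are measured strictly in sequence; the `purge_s` allowance is a hypothetical parameter that defaults to zero:

```python
# Rough sketch: per-stream revisit interval for a multiplexed analyzer.
# Assumptions: streams are measured strictly in sequence; `purge_s` is a
# hypothetical per-stream purge/settling allowance (0 by default).


def revisit_interval_s(n_streams, per_stream_s, purge_s=0.0):
    """Seconds between two successive measurements of the same stream."""
    if n_streams < 1:
        raise ValueError("need at least one stream")
    return n_streams * (per_stream_s + purge_s)


for per_stream_s in (10, 20):  # column-free MS response time from the table
    minutes = revisit_interval_s(64, per_stream_s) / 60
    print(f"64 streams at {per_stream_s} s each: revisit every {minutes:.1f} min")
```

At 10 to 20 seconds per measurement, a fully loaded 64-stream instrument returns to each stream only every 640 to 1280 seconds (about 10.7 to 21.3 minutes), comparable to a GC-MS cycle. Fast response per measurement and fast coverage per sampling point are separate requirements.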

Infrared and Near-Infrared (IR/NIR) Spectroscopy

Optical methods depend on the same kind of calibration dataset built on known reference blends. IR and NIR spectroscopy detect molecular vibration signatures characteristic of each species. When combined with PLS chemometric modeling, these techniques can quantify multiple components simultaneously with high accuracy, and in-line or in situ configurations avoid physical sample extraction altogether.
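The linear mixing model that multilinear regression and PLS both rely on can be written with the Beer–Lambert law. This is a sketch under the usual assumptions (absorbances of different species add, with no strong nonlinearity from pressure broadening or saturation). The symbols are introduced here and are not taken from the studies cited: $A(\tilde{\nu}_k)$ is absorbance at wavenumber $\tilde{\nu}_k$, $\ell$ the optical path length, $\varepsilon_i$ the absorptivity spectrum of species $i$, $c_i$ its concentration, and $e_k$ a residual for noise and baseline.

```latex
A(\tilde{\nu}_k) = \ell \sum_{i=1}^{n} \varepsilon_i(\tilde{\nu}_k)\, c_i + e_k,
\qquad k = 1, \dots, p
\quad\Longleftrightarrow\quad
\mathbf{a} = \ell\, \mathbf{E}\, \mathbf{c} + \mathbf{e}
```

When two species have overlapping bands, the corresponding columns of $\mathbf{E}$ are nearly collinear and $\mathbf{E}^\top \mathbf{E}$ becomes ill-conditioned. That is why projection methods such as PLS are generally preferred over directly inverting this system.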

In one application, NIR spectroscopy combined with PLS quantified methane, carbon dioxide, and water vapor in compressed natural gas systems at pressures up to 120 bar. The PLS models achieved R² values of 0.990 (CH₄), 0.993 (CO₂), and 0.947 (H₂O), with RMSEP values of 4.43%, 3.55%, and 8.35%, respectively.

FTIR systems sampling undiluted exhaust keep their sampling lines heated to 191 °C to prevent moisture condensation and the loss of hydrophilic compounds such as NH₃ and NO₂. Detection limits fall well below 1 ppm at 1-second resolution. This makes hot, wet extractive FTIR practical for continuous stack emissions monitoring, where cold, dried sampling would alter the sample matrix.

Multivariate Statistical Modeling (Chemometrics)

For any spectral technique dealing with complex, overlapping mixtures, raw spectral data alone is insufficient. Chemometric models—Principal Component Analysis (PCA), Partial Least Squares Regression (PLSR), and Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS)—map spectral patterns to known concentrations using calibration datasets.
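To show what "map spectral patterns to known concentrations" means in practice, here is a minimal from-scratch PLS1 sketch (one target species per model) using the NIPALS algorithm in pure Python. The spectra are synthetic and noise-free, built from two hypothetical Gaussian bands that overlap heavily. In production, a maintained implementation such as scikit-learn's `PLSRegression` is the usual choice.

```python
# Minimal PLS1 (NIPALS) sketch for one target species, pure Python.
# Assumption: synthetic, noise-free spectra from two HYPOTHETICAL bands.
import math


def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def pls1_fit(X, y, n_components):
    """Fit PLS1 by NIPALS; X is a list of spectra, y one species' values."""
    n, p = len(X), len(X[0])
    x_mean = [sum(row[j] for row in X) / n for j in range(p)]
    y_mean = sum(y) / n
    Xc = [[row[j] - x_mean[j] for j in range(p)] for row in X]  # mean-centered
    yc = [v - y_mean for v in y]
    W, P, Q = [], [], []
    for _ in range(n_components):
        w = [sum(Xc[i][j] * yc[i] for i in range(n)) for j in range(p)]  # X^T y
        norm = math.sqrt(dot(w, w))
        if norm < 1e-12:
            break  # nothing left to explain
        w = [v / norm for v in w]
        t = [dot(row, w) for row in Xc]  # scores
        tt = dot(t, t)
        p_load = [sum(Xc[i][j] * t[i] for i in range(n)) / tt for j in range(p)]
        q = dot(yc, t) / tt
        # Deflate X and y so the next component models what is left over.
        Xc = [[Xc[i][j] - t[i] * p_load[j] for j in range(p)] for i in range(n)]
        yc = [yc[i] - q * t[i] for i in range(n)]
        W.append(w)
        P.append(p_load)
        Q.append(q)
    return {"x_mean": x_mean, "y_mean": y_mean, "W": W, "P": P, "Q": Q}


def pls1_predict(model, x):
    """Predict one spectrum by replaying the same deflation steps."""
    xr = [a - m for a, m in zip(x, model["x_mean"])]
    y_hat = model["y_mean"]
    for w, p_load, q in zip(model["W"], model["P"], model["Q"]):
        t = dot(xr, w)
        y_hat += q * t
        xr = [a - t * b for a, b in zip(xr, p_load)]
    return y_hat


def band(center, width, grid):
    """Gaussian band shape (hypothetical), unit peak height."""
    return [math.exp(-(((g - center) / width) ** 2)) for g in grid]


GRID = [0.5 * k for k in range(41)]  # 0 to 20, arbitrary wavenumber units
BAND_A = band(9.5, 3.0, GRID)        # species A
BAND_B = band(10.5, 3.0, GRID)       # species B, heavily overlapping A


def spectrum(conc_a, conc_b):
    """Noise-free mixture spectrum under the linear mixing assumption."""
    return [conc_a * a + conc_b * b for a, b in zip(BAND_A, BAND_B)]


# Calibration design: full factorial over both species (hypothetical ppm).
train = [(ca, cb) for ca in (0, 25, 50, 75, 100) for cb in (0, 10, 20, 30)]
X = [spectrum(ca, cb) for ca, cb in train]
model_a = pls1_fit(X, [ca for ca, _ in train], n_components=2)
print(pls1_predict(model_a, spectrum(40.0, 15.0)))  # true value is 40.0
```

Two latent variables suffice here because the synthetic spectra have only two underlying sources. A one-component model leaks species B into the species A estimate. With real data, the number of components is chosen by validation, not by assumption.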

Standard practices for infrared multivariate quantitative analysis are governed by ASTM E1655, which requires:

- Acquiring spectral data from samples

- Correlating spectral data with known chemical properties using statistical methods like PLSR

- Validating model performance by comparing predicted results with actual measurements

Raw spectral data must be preprocessed before modeling to reduce noise and baseline drift. Standard preprocessing steps include:

- Mean-centering and scaling to unit standard deviation (autoscaling)

- First Savitzky–Golay derivative with smoothing

- Orthogonal signal correction (OSC)
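Two of the preprocessing steps listed above can be sketched in a few lines of pure Python. The edge handling and the treatment of zero-variance columns are simplifying assumptions, and OSC is not shown.

```python
# Sketch of two preprocessing steps: autoscaling and a 5-point
# Savitzky-Golay first derivative (quadratic or linear local fit).
import statistics


def autoscale(X):
    """Mean-center each column and divide by its sample standard deviation.

    Columns with zero spread are only centered (a simplifying choice).
    """
    cols = list(zip(*X))
    means = [statistics.fmean(c) for c in cols]
    sds = [statistics.stdev(c) for c in cols]
    return [
        [(v - m) / s if s > 0 else v - m for v, m, s in zip(row, means, sds)]
        for row in X
    ]


SG5_FIRST_DERIV = (-2, -1, 0, 1, 2)  # divide by 10 * spacing


def sg_first_derivative(spectrum, spacing=1.0):
    """5-point Savitzky-Golay first derivative at interior points.

    Returns len(spectrum) - 4 values; the two points at each edge are
    dropped rather than extrapolated (a simplifying choice).
    """
    half = len(SG5_FIRST_DERIV) // 2
    return [
        sum(c * spectrum[i + k - half] for k, c in enumerate(SG5_FIRST_DERIV))
        / (10.0 * spacing)
        for i in range(half, len(spectrum) - half)
    ]


# A constant baseline offset vanishes under the derivative.
print(sg_first_derivative([3 + 2 * i for i in range(8)]))
```

The derivative removes constant baseline offsets: the ramp `3 + 2i` yields a flat slope of 2 regardless of the offset of 3. This is one reason derivative preprocessing helps with baseline drift.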

Critical principle: The quality of the calibration dataset directly determines the accuracy of the final model. Reference gas blends used for calibration must have certified, NIST-traceable concentrations and verified stability—particularly for reactive species like H₂S, NH₃, or NO₂ that can degrade or adsorb onto cylinder walls between preparation and use. A model built on drifted or inaccurately prepared calibration gases produces biased concentration predictions from the first measurement.
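A short worked sketch shows why a drifted standard biases predictions from the first measurement. It assumes a linear analyzer with zero offset and a single-point span calibration. The cylinder values are hypothetical: labeled 10.0 ppm, but actually holding 9.5 ppm after wall adsorption.

```python
# Worked sketch: an off-label calibration cylinder biases every reading.
# Assumptions: linear analyzer, zero offset, single-point span calibration;
# all numbers are HYPOTHETICAL.


def span_factor(signal_at_span, labeled_ppm):
    """Signal per ppm, as the analyzer believes it to be."""
    return signal_at_span / labeled_ppm


def reported_ppm(signal, factor):
    """Concentration the analyzer reports for a given raw signal."""
    return signal / factor


TRUE_SENSITIVITY = 3.0       # signal units per ppm (unknown to the operator)
labeled, actual = 10.0, 9.5  # cylinder label vs real content

factor = span_factor(TRUE_SENSITIVITY * actual, labeled)
for true_sample in (5.0, 20.0, 80.0):
    r = reported_ppm(TRUE_SENSITIVITY * true_sample, factor)
    print(f"true {true_sample:5.1f} ppm -> reported {r:6.2f} ppm ({r / true_sample - 1:+.2%})")
```

Every sample is over-reported by the same factor, 10.0 / 9.5 ≈ 1.053. Because the error is proportional and consistent, nothing in the data itself flags it; only comparison against an independent, certified standard does.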

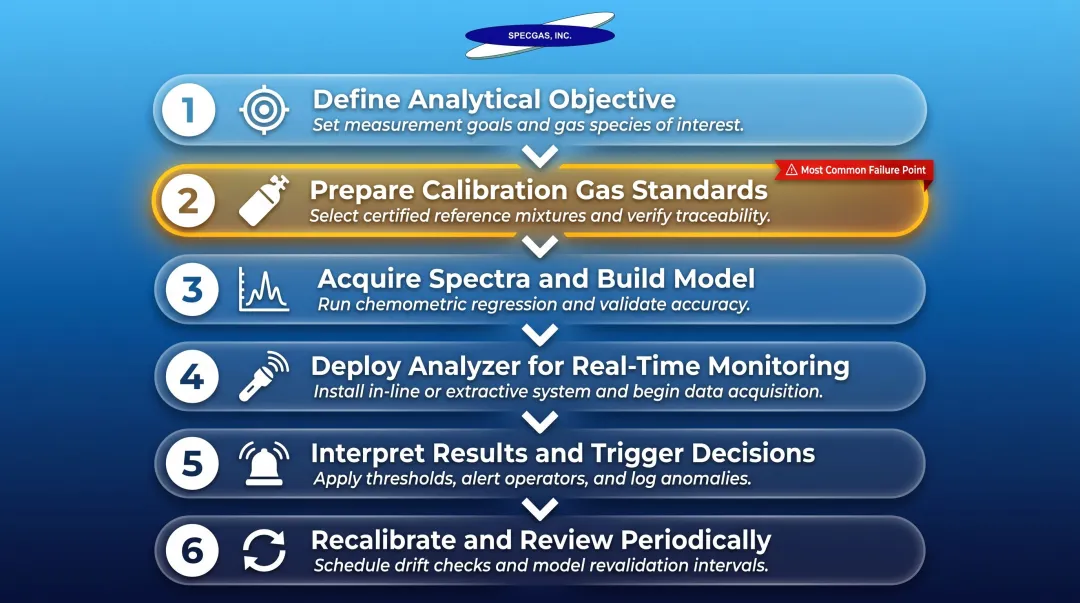

How Real-Time Quantitative Gas Analysis Works – Step by Step

This workflow breaks the analysis process into practical, real-world stages. Practitioners often underestimate the importance of the front-end stages—objective definition and calibration dataset preparation—and rush to data interpretation, which undermines the reliability of final results.

The single most common failure point: Building a predictive model on calibration gases whose composition is uncertain, unstable, or not NIST-traceable produces a model that appears mathematically sound but yields systematically biased concentration predictions in practice.

Step 1 – Define the Analytical Objective

Identify which species must be quantified, at what concentration range (ppm, ppb, percent), at what response time, and in what matrix background. Specify the accuracy requirements and regulatory or quality thresholds that results must meet.

This step determines:

- Which analytical technique is appropriate

- What calibration gas compositions are needed

- Selectivity requirements

- Detection limit targets

- Regulatory compliance thresholds

Getting these parameters wrong at the start forces costly rework at every downstream step.
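One way to keep Step 1 decisions from being lost is to record them as a checkable structure. This is a sketch only. The field names and the "detection limit at most one-tenth of the action threshold" check are illustrative assumptions, not a regulatory rule; set the ratio your quality plan actually requires.

```python
# Sketch: Step 1 analytical objective as a checkable record.
# Field names and the default LOD ratio are ILLUSTRATIVE assumptions.
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalyticalObjective:
    species: str
    range_low_ppm: float
    range_high_ppm: float
    max_response_s: float
    detection_limit_ppm: float
    action_threshold_ppm: float

    def problems(self, lod_ratio=0.1):
        """Return a list of inconsistencies; an empty list means none found."""
        issues = []
        if not self.range_low_ppm < self.range_high_ppm:
            issues.append("range low must be below range high")
        if not self.range_low_ppm <= self.action_threshold_ppm <= self.range_high_ppm:
            issues.append("action threshold lies outside the calibrated range")
        if self.detection_limit_ppm > lod_ratio * self.action_threshold_ppm:
            issues.append("detection limit too close to the action threshold")
        if self.max_response_s <= 0:
            issues.append("response time must be positive")
        return issues


# Formaldehyde against the NIOSH REL from the exposure-limit table (0.016 ppm).
hcho = AnalyticalObjective("formaldehyde", 0.005, 1.0, 5.0, 0.001, 0.016)
print(hcho.problems())
```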

Step 2 – Select and Prepare Reference Calibration Gas Standards

Prepare or procure certified calibration gas mixtures that span the full expected concentration range for each target species, including multi-component mixtures that reflect real-world sample compositions (including potential interferents). All reference standards should be NIST-traceable with documented uncertainty.

Reactive gas species require particular attention. For H₂S, NO, HF, formaldehyde, HCl, and chlorinated compounds, stability-certified cylinder treatment is essential to prevent concentration drift between preparation and use.

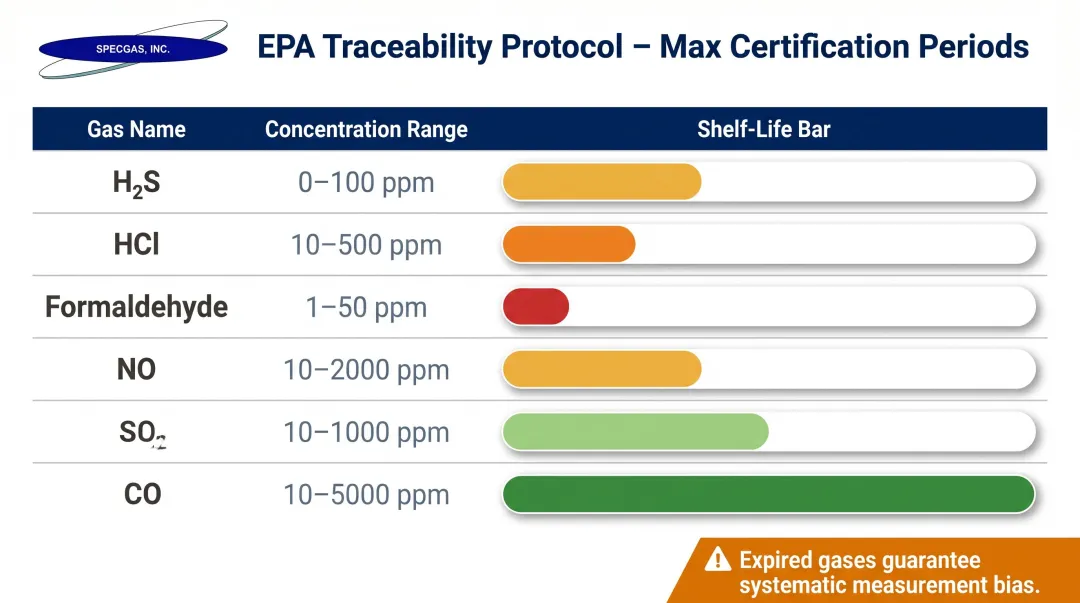

The EPA Traceability Protocol (EPA-600/R-12/531) establishes strict maximum certification periods for calibration standards stored in passivated aluminum cylinders:

| Gas Component | Concentration Range | Maximum Certification Period |
|---|---|---|
| H₂S | 1 to 1000 ppm | 3 years |
| HCl | 10 to 5000 ppm | 2 years |
| Formaldehyde | 0.5 to 10 ppm | 1 year |
| NO | 0.5 to 50 ppm | 3 years |
| SO₂ | 1 to 50 ppm | 4 years |
| CO | 1 ppm to 15% | 8 years |

Using expired calibration gases, or gases in unpassivated cylinders, risks systematic measurement bias and leaves compliance data without a valid certification, which can lead to failed RATA audits and regulatory fines.
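A small sketch of an expiry check based on the certification periods in the table above. Keys are ASCII stand-ins (`HCHO` is formaldehyde). The periods apply only within the listed concentration ranges, which this helper does not check. Treating a cylinder as valid through its expiration date is an assumed convention.

```python
# Sketch: flag cylinders past the maximum certification periods in the table
# (passivated aluminum cylinders). Concentration ranges are NOT checked here.
from datetime import date

MAX_CERT_YEARS = {"H2S": 3, "HCl": 2, "HCHO": 1, "NO": 3, "SO2": 4, "CO": 8}


def add_years(d, years):
    """Same calendar date `years` later; 29 Feb falls back to 28 Feb."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:  # 29 Feb in a non-leap target year
        return d.replace(year=d.year + years, day=28)


def expires_on(component, certified):
    return add_years(certified, MAX_CERT_YEARS[component])


def is_expired(component, certified, today):
    """Assumed convention: valid through the expiration date itself."""
    return today > expires_on(component, certified)


print(is_expired("HCHO", date(2024, 3, 1), date(2025, 6, 1)))  # True: 1-year limit
```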

Metrics impacted: Calibration accuracy, model robustness, traceability to recognized standards.

Step 3 – Acquire Calibration Spectra and Build the Predictive Model

Use the reference standards to collect optical spectra or mass spectra under controlled conditions. Apply preprocessing steps (alignment, normalization, autoscaling), then train a chemometric model (e.g., PLSR) on the calibration dataset.

Validate the model using test spectra from known concentrations not included in training. Report model accuracy metrics such as R² and RMSEP (Root Mean Square Error of Prediction). PLSR can successfully retrieve single-component concentrations even when absorption features overlap by more than 97%, but only when trained on high-quality calibration data.
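The two validation metrics named above are simple to compute on a held-out set. This sketch uses RMSEP = sqrt(mean((y_pred - y_ref)²)) in the units of the reference values, and R² = 1 - SS_res / SS_tot about the mean of the reference values. Some software reports a squared correlation instead, and the NIR example earlier reports RMSEP in percent, so check which definition a given package or paper uses. The validation data are hypothetical.

```python
# Sketch: RMSEP and R^2 on a held-out validation set.
import math


def rmsep(y_ref, y_pred):
    """Root mean square error of prediction, in the units of y_ref."""
    if len(y_ref) != len(y_pred) or not y_ref:
        raise ValueError("need equal-length, non-empty sequences")
    return math.sqrt(sum((p - r) ** 2 for r, p in zip(y_ref, y_pred)) / len(y_ref))


def r_squared(y_ref, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    mean_ref = sum(y_ref) / len(y_ref)
    ss_res = sum((r - p) ** 2 for r, p in zip(y_ref, y_pred))
    ss_tot = sum((r - mean_ref) ** 2 for r in y_ref)
    return 1.0 - ss_res / ss_tot


# Hypothetical validation set (ppm): reference blends vs model predictions.
ref = [10.0, 20.0, 30.0, 40.0]
pred = [11.0, 19.0, 31.0, 39.0]
print(f"RMSEP = {rmsep(ref, pred):.2f} ppm, R^2 = {r_squared(ref, pred):.3f}")
```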

Field evidence: An FTIR-based CO₂ impurity monitoring study found that operator-dependent selection of absorption regions for SO₂ and CO produced concentration variability exceeding 92.6% at low impurity levels. Standardizing the absorption region selection protocol eliminated the bias — the calibration data quality was never the problem; the procedure was.

Metrics impacted: Prediction accuracy, model generalizability, confidence in real-time outputs.

Step 4 – Deploy the Analyzer for Real-Time Monitoring

Connect the calibrated instrument to the sample stream or environment. The system continuously acquires spectra or mass signals and applies the validated model to calculate real-time concentration outputs for each species.

Maintain stable inlet conditions and monitor for instrument drift. Under EPA 40 CFR Part 60 Appendix F (Quality Assurance Procedures for CEMS), facilities must perform daily calibration drift (CD) checks at zero and high-level concentrations.
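A sketch of the daily calibration drift calculation: CD (% of span) = |R - A| / S × 100, where R is the reference gas value, A the analyzer response, and S the span. The out-of-control rule below (more than twice the drift specification on five consecutive days, or more than four times it on any single check) reflects a reading of Appendix F. The 2.5%-of-span specification is an assumed example; confirm both against the applicable performance specification and your permit.

```python
# Sketch: daily calibration drift (CD) and an out-of-control check.
# The 2x/4x rule and the 2.5% spec are ASSUMPTIONS to verify.


def drift_pct(reference, response, span):
    """Calibration drift as a percentage of span."""
    return abs(reference - response) / span * 100.0


def out_of_control(daily_cd_pct, spec_pct=2.5):
    """daily_cd_pct: CD results in date order for one level (zero or high)."""
    if any(cd > 4 * spec_pct for cd in daily_cd_pct):
        return True
    run = 0
    for cd in daily_cd_pct:
        run = run + 1 if cd > 2 * spec_pct else 0
        if run >= 5:
            return True
    return False


print(drift_pct(450.0, 461.0, 500.0))  # hypothetical high-level check
```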

Metrics impacted: Response time, throughput, continuous coverage.

Step 5 – Interpret Results and Trigger Decisions

Translate concentration outputs into specific actions: alarms when thresholds are crossed, process adjustments, compliance log entries, or safety interventions. Do not act on outputs outside the concentration range covered by the calibration dataset — the model has no validated basis for extrapolation beyond that boundary.
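The extrapolation rule above can be enforced in the decision logic itself. This is a sketch. The state names are hypothetical, and treating an out-of-range reading as its own state rather than an alarm is a design assumption; a safety system may need to alarm on over-range as well.

```python
# Sketch: Step 5 decision logic with an explicit calibration-range guard.
from enum import Enum


class Action(Enum):
    OK = "ok"
    ALARM = "alarm"
    OUT_OF_CALIBRATED_RANGE = "out_of_calibrated_range"


def classify(reading_ppm, cal_low_ppm, cal_high_ppm, alarm_ppm):
    """Map one real-time reading to an action."""
    if not cal_low_ppm <= reading_ppm <= cal_high_ppm:
        # No validated basis for this value: flag it, don't trust the number.
        return Action.OUT_OF_CALIBRATED_RANGE
    if reading_ppm >= alarm_ppm:
        return Action.ALARM
    return Action.OK


# Hypothetical H2S channel calibrated 1-50 ppm, alarm at the 10 ppm NIOSH ceiling.
print(classify(12.0, 1.0, 50.0, 10.0))
```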

Metrics impacted: Decision confidence, false alarm rate, response latency.

Step 6 – Recalibrate and Review the Model Periodically

Over time, instrument drift, changes in sample matrix composition, or introduction of new interferent species can degrade model accuracy. Establish a recalibration schedule using fresh reference standards, and update the calibration model when sample conditions change materially.

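On the regulatory side, EPA requires quarterly accuracy assessments: a Cylinder Gas Audit (CGA), Relative Accuracy Audit (RAA), or Relative Accuracy Test Audit (RATA). Under 40 CFR Part 60 Appendix F, a RATA must be performed at least once every four calendar quarters, with a CGA or RAA allowed in the other quarters. These audits can expose drift that routine daily checks miss.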
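For the CGA option, here is a minimal sketch of the accuracy calculation: accuracy (%) = (Cm - Ca) / Ca × 100, where Cm is the average analyzer response and Ca is the certified audit value. The pass criterion (within 15% of Ca or 5 ppm, whichever is greater) reflects a reading of Appendix F. Treat it as an assumption and confirm it for your program.

```python
# Sketch: cylinder gas audit (CGA) accuracy. Limits are ASSUMPTIONS to verify.
from statistics import fmean


def cga_result(responses_ppm, audit_ppm, pct_limit=15.0, abs_limit_ppm=5.0):
    """Return (accuracy_pct, passed) for one audit gas level."""
    cm = fmean(responses_ppm)
    accuracy_pct = (cm - audit_ppm) / audit_ppm * 100.0
    allowed_ppm = max(pct_limit / 100.0 * audit_ppm, abs_limit_ppm)
    return accuracy_pct, abs(cm - audit_ppm) <= allowed_ppm


# Hypothetical low-level audit: about 18.8% high, yet within the 5 ppm floor.
print(cga_result([23.5, 24.0, 23.8], 20.0))
```

At low audit concentrations, the absolute floor dominates the percentage limit, so a result that looks large in percent can still pass.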

One operational variable that often goes unaddressed: calibration gas shelf life. Reactive mixtures that have degraded will produce biased recalibration results even when all other procedures are correct. Do not use a certified standard once cylinder pressure falls below 100 psig (0.7 MPa) — NIST has documented measurable concentration changes below this threshold.
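The 100 psig cutoff also determines how much of a cylinder is actually usable. A rough sketch, assuming ideal-gas behavior at constant temperature so that contained gas scales with absolute pressure (gauge plus 14.7 psi). Real high-pressure blends deviate from ideal behavior, so treat the result as an estimate.

```python
# Sketch: usable fraction of cylinder contents above the 100 psig cutoff.
# Assumption: ideal gas at constant temperature.
ATM_PSI = 14.7
CUTOFF_PSIG = 100.0
MPA_PER_PSI = 0.006894757


def usable_fraction(gauge_psig, cutoff_psig=CUTOFF_PSIG):
    """Fraction of the current contents that can be used before the cutoff."""
    if gauge_psig <= cutoff_psig:
        return 0.0
    return 1.0 - (cutoff_psig + ATM_PSI) / (gauge_psig + ATM_PSI)


def psig_to_mpa_gauge(psig):
    return psig * MPA_PER_PSI


print(f"{usable_fraction(2000.0):.1%} usable; cutoff = {psig_to_mpa_gauge(100.0):.2f} MPa")
```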

Metrics impacted: Long-term accuracy, compliance auditability, operational confidence.

The Critical Role of Calibration Gas Standards in Real-Time Quantitative Analysis

Real-time quantitative analysis is only as accurate as the reference data it is calibrated against. Every technique described—mass spectrometry, NIR spectroscopy, chemometric modeling—requires a training or calibration dataset built from gases of known, certified composition. If the reference standard is uncertain, unstable, or imprecisely blended, that error propagates directly into every measurement the system makes in the field.

Specific Challenges in Calibration Gas Preparation

Preparing stable multi-component standards at low ppm or ppb concentrations presents significant technical challenges. Reactive species like H₂S, NO, HF, formaldehyde, HCl, and chlorinated compounds are prone to adsorption onto cylinder walls, causing concentration drift over time. To mitigate adsorption, internal cylinder surfaces are typically treated with coatings such as SilcoNert 2000, Sulfinert, or Performax to keep trace-level reactive gases stable.

Achieving NIST-traceable accuracy across all components simultaneously requires gravimetric blending with documented uncertainty analysis at each step.

On the regulatory side, the EPA requires calibration gases used for auditing ambient air quality analyzers and CEMS to be traceable to NIST reference standards. EPA Protocol Gases must be certified to an analytical uncertainty (95% confidence interval) of no more than ±2.0% of the certified concentration.
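A minimal sketch of the gravimetric calculation: mole fraction x_i = (m_i / M_i) / Σ_j (m_j / M_j). The uncertainty part is deliberately simplified. It combines only the relative standard uncertainties of the weighings in quadrature, ignores molar-mass, purity, and adsorption terms, and expands with k = 2 (about 95% coverage) for comparison with the ±2.0% limit above. Masses and balance uncertainties are hypothetical.

```python
# Sketch: gravimetric mole fraction plus a SIMPLIFIED uncertainty check.
import math

MOLAR_MASS = {"H2S": 34.08, "N2": 28.014}  # g/mol


def mole_fractions(masses_g):
    """Mole fraction of each component from weighed masses in grams."""
    moles = {k: m / MOLAR_MASS[k] for k, m in masses_g.items()}
    total = sum(moles.values())
    return {k: n / total for k, n in moles.items()}


def expanded_rel_uncertainty(rel_std_uncertainties, k=2.0):
    """Quadrature sum of relative standard uncertainties, times k."""
    return k * math.sqrt(sum(u * u for u in rel_std_uncertainties))


# Hypothetical single-step blend targeting about 100 ppm H2S in N2.
x = mole_fractions({"H2S": 0.2433, "N2": 2000.0})
u = expanded_rel_uncertainty([0.0005 / 0.2433, 0.05 / 2000.0])
print(f"H2S = {x['H2S'] * 1e6:.1f} ppm, U(k=2) = {u:.2%}, within 2.0%: {u <= 0.02}")
```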

For most labs, sourcing from a specialty gas supplier with certified reactive-mixture expertise is more reliable—and more defensible—than attempting in-house preparation.

SpecGas Inc.'s Approach to Calibration Gas Stability

SpecGas Inc.'s proprietary internal cylinder treatment process and blending techniques are engineered specifically to address reactive gas stability. The company produces NIST-traceable multi-component calibration standards with documented shelf life, so the gases used to build and maintain a real-time analysis model stay at certified composition throughout their service life.

Founder Alfred Boehm began his career in 1976 at Messer Griesheim Industries in Germany, advancing to director-level positions in specialty gas R&D before transferring to the US in 1991 to continue that work. He founded SpecGas in 2001, bringing that background in reactive gas handling directly into the company's blending and cylinder treatment processes.

SpecGas produces custom multi-component blends tailored to the exact species and concentration ranges required for a given monitoring application, including:

- Low ppm and ppb standards for FTIR analyzers and gas chromatography systems

- Moisture analyzer calibration mixtures at precisely controlled concentration levels

- Complex reactive blends (H₂S, HCl, HF, formaldehyde) with documented shelf life

- All mixtures produced gravimetrically with traceable uncertainty budgets

Direct Impact on Real-Time Analysis Performance

Stable, accurately certified calibration gases reduce model prediction error, support regulatory defensibility of monitoring data, and eliminate a critical source of systematic bias from the measurement chain. When reference standards drift or carry undocumented uncertainty, the cost shows up in failed audits, model retraining, and regulatory challenges, all of which are avoidable with properly certified calibration gases from the start.

How SpecGas Inc. Can Help

SpecGas Inc. supplies precision calibration gases to laboratories, monitoring operations, and industrial facilities running real-time gas analysis. Founded by research chemist Alfred Boehm, whose specialty gas career dates back to 1976, SpecGas brings proprietary blending and cylinder treatment processes that few suppliers can replicate.

Specific Capabilities:

- Custom multi-component calibration blends at ppm and ppb concentrations, formulated to match the exact species and matrix of your monitoring application

- NIST-traceable gas standards with documented accuracy for defensible, auditable analysis programs

- Proprietary stability guarantee for reactive gas mixtures, extending shelf life and protecting calibration quality over time

- Lead times that outpace most specialty gas suppliers, with rush service available when schedules are tight

Even the most capable real-time analyzer drifts without traceable, stable calibration gases behind it. Contact SpecGas at (215) 443-2600 or website-inquiries@specgasinc.com to discuss your mixture requirements.

Conclusion

Real-time quantitative analysis of complex gas and vapor mixtures is a technically demanding discipline that connects the right analytical technique, a validated chemometric model, and above all, a trustworthy calibration dataset. Each layer depends on the one below it, which means measurement quality starts with the calibration gas.

That foundation requires ongoing attention, not a one-time setup. Instruments drift, sample matrices change, and reactive calibration gases can degrade in cylinders that are not treated to hold them. Regular recalibration against certified, stable reference standards maintains the accuracy and defensibility of real-time monitoring data over time.

Suppliers like SpecGas Inc. address this directly through proprietary internal cylinder treatment processes specifically developed for reactive gas stability, paired with NIST-traceable blending that gives labs and monitoring programs a verifiable, consistent baseline. Organizations that build their calibration programs around that kind of rigor — sourcing from suppliers who can demonstrate it, not just claim it — are far better positioned to meet regulatory requirements, protect worker safety, and keep process control data defensible when it matters most.

Frequently Asked Questions

What is the difference between qualitative and quantitative gas analysis?

Qualitative analysis identifies which gases are present in a mixture (for example, by matching retention times in chromatography), while quantitative analysis determines the exact concentration of each species. Real-time quantitative analysis does both continuously, which is essential for process control, compliance monitoring, and safety applications where concentration thresholds matter.

What analytical techniques are best suited for complex multi-component gas mixtures?

The leading approaches include:

- Quadrupole mass spectrometry (with or without GC separation)

- Infrared and near-infrared spectroscopy

- Multivariate chemometric modeling (such as PLSR)

The right choice depends on the specific species, detection limits, and required response time. Chemometric methods are often necessary when components have overlapping spectral or mass signatures.

How do overlapping spectral signals affect quantitative accuracy in gas mixtures?

When two or more components produce similar spectral or mass fragmentation patterns, simple peak-reading methods cannot distinguish their contributions. Chemometric models trained on calibration datasets that include all relevant species can mathematically deconvolute the overlapping signals and accurately predict each component's concentration.

How does the quality of calibration gas affect real-time analyzer performance?

Calibration gas quality directly determines model accuracy. If the reference standard has uncertain composition, unstable concentrations, or reactive species that have degraded, every measurement the system produces will carry that error. NIST-traceable, stability-certified calibration gases — with documented shelf-life guarantees for reactive species — ensure measurements remain defensible and accurate throughout the cylinder's service life.

How often should calibration gases be replaced for continuous monitoring systems?

Replacement frequency depends on the gas species, cylinder treatment quality, and the accuracy requirements of the application. Reactive gases degrade faster, so follow the certified expiration date on each cylinder closely. Under the EPA Traceability Protocol, maximum certification periods in passivated aluminum cylinders are 1 year for formaldehyde, 2 years for HCl, and 3 years for H₂S, within the concentration ranges the protocol lists. Recalibration should also be triggered after any significant change in sample matrix composition or instrument service.

What is NIST traceability and why does it matter for gas analysis?

NIST traceability means a gas standard's certified concentration has been established through an unbroken chain of measurements linked to the National Institute of Standards and Technology's reference values. For regulatory compliance and quality assurance, NIST-traceable standards provide documented, defensible proof that measurements meet an established accuracy benchmark.